Automated Testing and Debugging

High-speed digital standards are evolving quickly to support the performance demands of our data-driven world. Next-generation serial standards and data communication requirements bring new test challenges that push the limits of today’s compliance and debug tools. From design and simulation through analysis, debug, and compliance testing, Tektronix provides automated electrical test solutions to optimize performance, speed up validation cycles, and accelerate time-to-market.

Accurate, Repeatable Testing of High-Performance Computing Standards

The demand for greater data throughput and increased memory capacity continues to grow in data-intensive markets such as data centers, artificial intelligence (AI), and high-performance computing. Higher data transfer rates lead to complex designs that push the boundaries of signal integrity and require higher-performance measurements for compliance, debugging, and validation. Tektronix's automated test solutions equip engineers to test their latest designs by addressing these challenges with accuracy and repeatability.

Reduced Time to Test Consumer Technologies

The pace of innovation is driving demand for newer and faster consumer devices. The standards underlying their development introduce new test challenges in the form of increased data rates and design complexity. Tektronix test solutions provide accurate, repeatable measurements and reduce the time needed to test conformance to the latest specifications.

Display (DisplayPort and HDMI)

Comprehensive Tools for Ethernet Device Designs

Ethernet provides network connectivity ranging from hyper-scale data centers and enterprise systems to automotive and industrial applications. Tektronix offers comprehensive toolsets for testing, developing, and debugging the physical layer of IEEE 802.3 Ethernet devices in these ecosystems.

Advanced Signal Integrity Measurement and Analysis Tools

Tektronix offers a powerful suite of analysis tools for today’s high-speed serial standards – built to handle complex, multi-source configurations with speed and flexibility. With intuitive workflows, high-density measurement capabilities, and industry-leading visualization, Tektronix tools enable engineers to analyze more data, more easily, in less time.

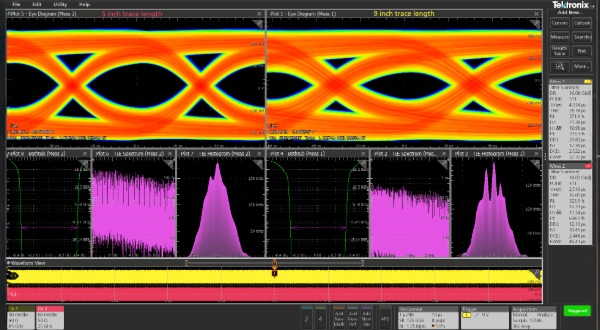

Jitter and Eye Diagram Analysis (opt. DJA)

De-embedding, Embedding and Equalization (opt. SIM Advanced)

For bandwidths above 25 GHz, DPOJET, PAMJET, and SDLA remain available on the DPO70000 Series platform.