Why ENOB Tells You More than ADC Bit Depth


Effective resolution matters (way) more than nominal ADC bits in real-world signal measurements.

Reality turned into numbers

When we look at a picture, our mind makes the connection between the image and the fact that it is a representation of something existing in the real world. We assume it to be an accurate, true representation of a landscape, or a portrait, or anything represented for what it is, in “reality”.

A similar process happens when, in the obvious impossibility of “visualizing” electrons flowing in a circuit, we assume that a waveform represented on the screen of an oscilloscope is actually an accurate representation of the voltage over time at a specific node.

This trust was rarely questioned when instruments were analog; the electron beam tracing the phosphor screen was “painting”, like a brush, a true representation of another analog entity, the voltage. Even then, engineers asked whether the “brush” was too thick with regard to the “real” signal and sought better display quality to make the trace thinner, and hence more accurate. The analog signal path was trusted as well, with the amplifier’s noise accepted as inevitable.

Figure 1. Example of analog oscilloscope trace.

Then came the “bits”. In the digital world, any visual point of the waveform is a result of a complex numerical calculation including filtering, quantization, interpolation, rasterization, etc.

Voltage signals were processed into something else, and that “something else” was presented to our eyes. The questioning about precision got way more complex and became a huge topic of discussion, so much so that some nostalgic devotees of the analog world resisted the shift toward digital signal processing.

But for everyone else, the acquired “real world signal” became the result of adjustment, conditioning and processing that finally “spits out” a number and turns on a pixel on the screen. We traded reality for “realistic” in the name of better resolution.

But what is resolution?

Resolution is the smallest measurable change in a physical quantity that you can detect and display. Since instruments are physical entities as well, their resolution is also intrinsically limited by their physical constraints. Analog instruments were no different, suffering from mechanical precision limits and intrinsic factors like noise. They also had the extra factor of not giving you a number but a needle position or a light beam on a graduated scale to interpret. The definition of resolution in signal processing did not change, but factors affecting it did. The good thing is that these factors can be categorized, estimated and quantified.

In this blog we will focus on just one type of resolution: the one related to amplitude measurements of voltages. But resolution also applies to time, space, or any other physical quantity.

Amplitude resolution, wrongly defined

Several books define the amplitude resolution of (digital, now implicit) oscilloscopes as the number of bits (or “bit depth”) of the ADC, the analog-to-digital converter. This definition is wrong; it has confused (and still confuses) engineers, but it is loved by marketers who prefer a single, simple number.

The number of bits of an ADC determines just one thing: the theoretical upper limit of amplitude resolution. Nothing other than that.
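To put a number on that theoretical upper limit, here is a minimal sketch of the ideal step size (one LSB) for a few bit depths. The 0.8 V full-scale range is an assumption chosen for illustration (for example, 8 vertical divisions at 100 mV/div), and `ideal_lsb_volts` is a hypothetical helper name, not something from an instrument API.

```python
def ideal_lsb_volts(bits, full_scale_volts):
    """Smallest voltage step an ideal, noise-free ADC could resolve."""
    return full_scale_volts / 2 ** bits


# Assumed full-scale range: 0.8 V (illustrative only).
for bits in (8, 10, 12):
    lsb_mv = ideal_lsb_volts(bits, 0.8) * 1e3
    print(f"{bits}-bit ideal ADC: {lsb_mv:.4f} mV per step")
```

These figures are a best case only; noise and distortion in a real signal path mean the steps an instrument can actually distinguish are coarser.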

Now imagine two athletes; one says she cannot jump higher than 1 meter, no matter what, and the second says she cannot jump higher than 80 centimeters. If you had to pick one for your team you would naturally go for the first, right? That’s what I would do, too. But then you look at the track records for each athlete and discover that the first athlete has never jumped higher than 60 cm while the second athlete has jumped 65 cm. If you could reconsider your initial choice, which one would you select now? For oscilloscopes’ amplitude resolution, the story is the same.

Is an ADC as good as its number of bits?

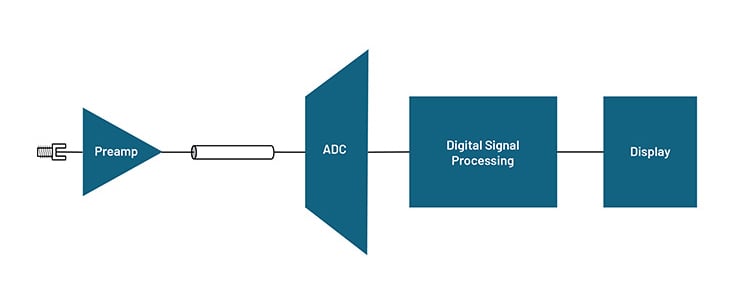

Spoiler alert: nope. Regardless of its number of bits, the ADC must be carefully designed for linearity, minimal internal clock jitter and other factors affecting interpolation efficiency. But there is more: the ADC is only one part of the oscilloscope’s signal path. Before it reaches the ADC to be digitized, the signal passes through the front end, where attenuators and amplifiers can add significant noise and distortion.

Figure 2. Typical digital oscilloscope signal path structure.

To be precise, we should also consider what happens to the digitized signal after the ADC, because this stage is critical too. Instrument designers neither start nor finish their job at the ADC. They need to store the digitized data into memory quickly and correctly, apply intelligence to it through complex triggering conditions and, last but not least, make sure that whatever they do to these numbers (averaging, filtering, rendering and “who knows what”) is meaningful and precise. In conclusion, an ADC is not as good as its nominal number of bits, and an oscilloscope is not as good as its ADC.

So, how do I select the right athlete for my team?

There is one clever idea in the story above: using a metric expressed in bits to quantify amplitude resolution, that is, how finely an instrument can differentiate voltage levels. We now know that the nominal bit depth only tells us how many discrete voltage levels an ideal ADC (placed after an ideal, noise-free front end) could resolve over the oscilloscope’s full voltage scale. Is there anything we could build starting from that?

Real-world ADC bit depth calculation: Welcome to ENOB

Some formulas have been identified throughout the years to calculate a real-world ADC resolution, and they all claim to calculate something defined as ENOB, which stands for Effective Number of Bits.

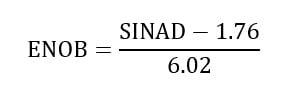

The concept of ENOB is quite old; IEEE 1057 was first published in 1989, and soon after the test and measurement industry started adopting it. The reference included the following famous formula and its results started appearing in oscilloscope datasheets.
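For reference, the relationship usually quoted for a full-scale sine-wave input, with SINAD expressed in dB, is:

$$
\mathrm{ENOB} = \frac{\mathrm{SINAD} - 1.76}{6.02}
$$

The constants come from the signal-to-noise ratio of an ideal $N$-bit quantizer driven by a full-scale sine wave, $6.02\,N + 1.76$ dB; solving that expression for $N$ with the measured SINAD in place of the ideal ratio gives the effective number of bits.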

Looking at the formula, it seems that we have just moved the ambiguity from ENOB to SINAD, so SINAD needs to be defined. SINAD stands for Signal to Noise and Distortion Ratio, and it measures how much a signal is degraded by noise and by non-linearities (distortion) in the signal path.

It is indeed an interesting metric to evaluate an ADC, since it combines the effect of both noise and distortion, referenced to the power of the “useful” input when it is a pure (ideal) sine wave.

If you apply a 1 MHz sine wave with 2 mW of fundamental signal power and you estimate 20 µW of combined noise and distortion, the power ratio is 100 and the SINAD is 20 dB. Applying the formula, this means that you have an ENOB of about 3 bits at 1 MHz.

Now you apply a 100 MHz sine wave with the same power, and you see the total degradation rising to 80 µW. The SINAD falls to about 14 dB and ENOB drops to only about 2 bits. Imagine getting these results for an ADC whose declared nominal number of bits is 8, regardless of the input frequency.
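The arithmetic in these two examples can be reproduced with a short sketch. It assumes the commonly quoted full-scale sine-wave relationship ENOB = (SINAD − 1.76) / 6.02 with SINAD in dB; the function names are illustrative only.

```python
import math


def sinad_db(signal_power_w, noise_and_distortion_power_w):
    """SINAD in dB from fundamental power and total noise-plus-distortion power."""
    return 10 * math.log10(signal_power_w / noise_and_distortion_power_w)


def enob_from_sinad(sinad):
    """ENOB from SINAD (dB), assuming a full-scale sine-wave input."""
    return (sinad - 1.76) / 6.02


# Values taken from the two examples in the text.
for label, degradation_w in (("1 MHz", 20e-6), ("100 MHz", 80e-6)):
    s = sinad_db(2e-3, degradation_w)
    print(f"{label}: SINAD = {s:.1f} dB, ENOB = {enob_from_sinad(s):.2f} bits")
```

Quadrupling the noise and distortion power costs about 6 dB of SINAD, which is roughly one effective bit.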

Keep in mind that in order to measure SINAD you need to use other instruments whose own SINAD has to be measured as well. As you can imagine, the topic is open to discussion and debate in the engineering community. There are plenty of papers discussing these formulas in detail; what matters here is to understand a few key aspects.

What engineers selecting an oscilloscope must know

We already clarified the first and most important smokescreen: nominal ADC bit depth is not a quantitative indicator of amplitude resolution. Oscilloscopes that use a 12-bit ADC may have an actual resolution of less than 3 bits in some operating situations. It is also true that an oscilloscope with 10-bit nominal resolution ADC can be better than one using a 12-bit nominal resolution ADC.
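A toy simulation can illustrate how a lower-bit ADC behind a quiet front end might outperform a higher-bit ADC behind a noisy one. This is a sketch under stated assumptions, not a model of any real instrument: an ideal quantizer, Gaussian front-end noise with made-up RMS levels, a full-scale sine sampled coherently, a simple sine fit to separate the fundamental from everything else, and the full-scale relationship ENOB = (SINAD − 1.76) / 6.02. All names and values are hypothetical.

```python
import math
import random

FULL_SCALE = 2.0  # assumed input range of -1 V to +1 V


def quantize(v, bits):
    """Ideal quantizer: clip to the input range and return the code's centre voltage."""
    lsb = FULL_SCALE / 2 ** bits
    top = 2 ** (bits - 1) - 1
    code = max(-top - 1, min(top, math.floor(v / lsb)))
    return (code + 0.5) * lsb


def simulated_enob(bits, front_end_noise_rms, n=4096, cycles=127, seed=1):
    """Estimate ENOB for a full-scale sine with noise added ahead of the ADC."""
    rng = random.Random(seed)
    phases = [2 * math.pi * cycles * k / n for k in range(n)]
    samples = [quantize(math.sin(p) + rng.gauss(0.0, front_end_noise_rms), bits)
               for p in phases]
    # With a whole number of cycles, the least-squares sine fit reduces to these sums.
    a = 2 / n * sum(x * math.sin(p) for x, p in zip(samples, phases))
    b = 2 / n * sum(x * math.cos(p) for x, p in zip(samples, phases))
    c = sum(samples) / n
    signal_power = (a * a + b * b) / 2
    # Whatever the fit does not explain is counted as noise and distortion.
    nad_power = sum((x - (a * math.sin(p) + b * math.cos(p) + c)) ** 2
                    for x, p in zip(samples, phases)) / n
    sinad_db = 10 * math.log10(signal_power / nad_power)
    return (sinad_db - 1.76) / 6.02


print("12-bit ADC, ideal front end:", round(simulated_enob(12, 0.0), 2))
print("12-bit ADC, 1 mV RMS noise: ", round(simulated_enob(12, 1e-3), 2))
print("10-bit ADC, 0.2 mV RMS noise:", round(simulated_enob(10, 0.2e-3), 2))
```

With these assumed noise levels, the 10-bit path should report the higher ENOB of the two noisy cases, because front-end noise rather than quantization dominates the 12-bit result.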

Since ENOB is always lower than the nominal ADC bit depth, sometimes by a wide margin, a wise choice is made only after careful spec analysis and practical lab tests to verify the measurements.

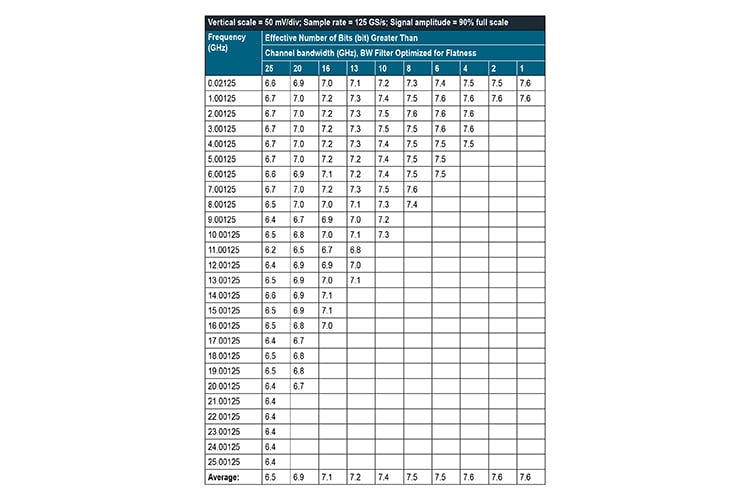

The engineering community largely agrees on ENOB as a concept but might disagree on which ENOB formulas to apply in different measurement contexts. Even so, lab tests of ENOB can be used to benchmark different oscilloscopes. It is important to remember that ENOB, unlike the nominal number of bits, is not a fixed number but a spec that varies with frequency, amplitude and general test conditions, so a fair and serious comparison requires a deep dive into the technical material. Lab tests in controlled conditions and field tests may give different results; it is important to understand which specs the vendor is showing you and under what conditions the claimed numbers were obtained.

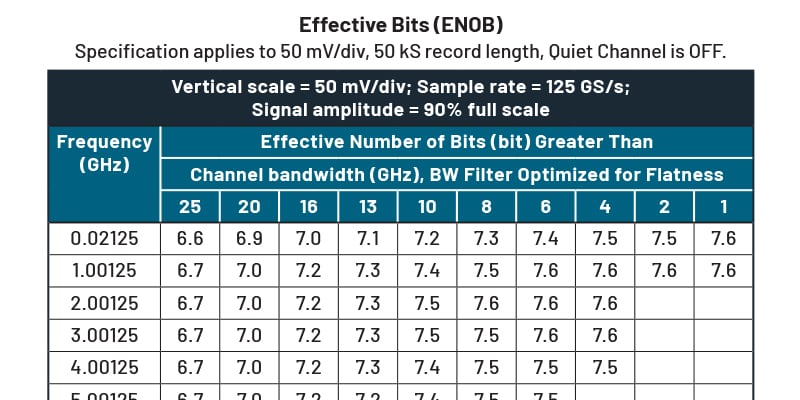

Effective Bits (ENOB)

Specification applies to 50mV/div, 50kS record length, Quiet Channel is OFF.

Figure 3. An example of an ENOB specification table, as provided in the 7 Series DPO Specifications and Performance Verification technical reference manual. The manual provides specifications and performance verification procedures for verifying guaranteed specifications for the DPO714AX.

Finally, vertical resolution should not be considered in isolation but as part of the broader context of application requirements. Considerations like selectable vertical sensitivity, bandwidth, sample rate, jitter, and noise floor might be more critical to evaluate in detail. Before making up your mind, it is worth spending some time with an experienced applications engineer to make an informed decision.