Video Measurements

Monitoring and measuring tools

We know that digital television is a stream of numbers, and this may lead to some unnecessary apprehension. Everything seems to be happening really fast, and we need some help to sort everything out. Fortunately, video, and especially the ancillary information supporting video, is quite repetitive, so all we need is the hardware to convert this high-speed numeric data to something we can study and understand. Why not just convert it to something familiar, like analog video?

Digital video, either standard definition or the newer high-definition studio formats, is very much the same as its analog ancestor. Lots of things have improved with time, but we still make video with cameras, from film, and today, from computers. The basic difference for digital video is the processing early in the chain that converts the analog video into numeric data and attaches ancillary data to describe how to use the video data. For live cameras and telecine, analog values of light are focused on sensors, which generates an analog response that is converted somewhere along the line to numeric data. Sometimes we can get to this analog signal for monitoring with an analog waveform monitor, but more often the video will come out of the equipment as data. In the case of computer generated video, the signal probably was data from the beginning. Data travels from source equipment to destination on a transport layer. This is the analog transport mechanism, often a wire, or a fiber-optic path carrying the data to some destination. We can monitor this data directly with a high-bandwidth oscilloscope, or we can extract and monitor the data information as video.

Operationally, we are interested in monitoring the video. For this we need a high-quality waveform monitor equipped with a standards-compliant data receiver to let us see the video in a familiar analog display. Tektronix provides several digital input waveform monitors including the WVR7120/7020/6020 series 1RU rasterizer (Figure 1) for standard/high-definition component digital video and the WFM6120/7020/7120 (Figure 2) series 3RU half-rack monitor which is configurable for any of the digital formats in common use today.

Technically, we may want to know that the camera or telecine is creating correct video data and that ancillary data is accurate. We may also want to evaluate the analog characteristics of the transport layer. The Tektronix VM700T with digital option, the WFM6120 and WFM7120 allow in-depth data analysis and a direct view of the eye-pattern shape of the standard definition transport layer. The new WFM7120/6120 series high-definition monitors provide tools for both transport and data layer technical evaluation.

A test signal generator serves two purposes. It provides an ideal reference video signal for evaluation of the signal processing and transmission path, and it provides an example of the performance you should expect of today’s high-quality system components. Some generation equipment, such as the Tektronix TG700 signal generator platform shown in Figure 3, provides options for both analog and digital, standard and high-definition signal formats.

These tools allow an operator to generate video that is completely compatible with the transmission system, video processing devices, and finally with the end viewer’s display. Perhaps most important, these tools provide an insight into the workings of the video system itself that increases technical confidence and awareness to help you do your job better.

Monitoring digital and analog signals

There is a tendency to think of any video signal as a traditional time/amplitude waveform. This is a valid concept and holds for both analog and digital. For analog video, the oscilloscope or waveform monitor displays a plot of signal voltage as time progresses. The waveform monitor is synchronized to show the desired signal characteristic as it occurs at the same horizontal position on the waveform monitor display each time it occurs, horizontally in the line, or vertically in the field. A digital waveform monitor shows the video information extracted from the incoming data signal in the same manner as the analog waveform monitor.

You see the same information in the same way from the analog or digital signals. For analog you see the direct signal; for digital you see the signal described by the data. Operationally, you use the monitor to make the same video evaluations.

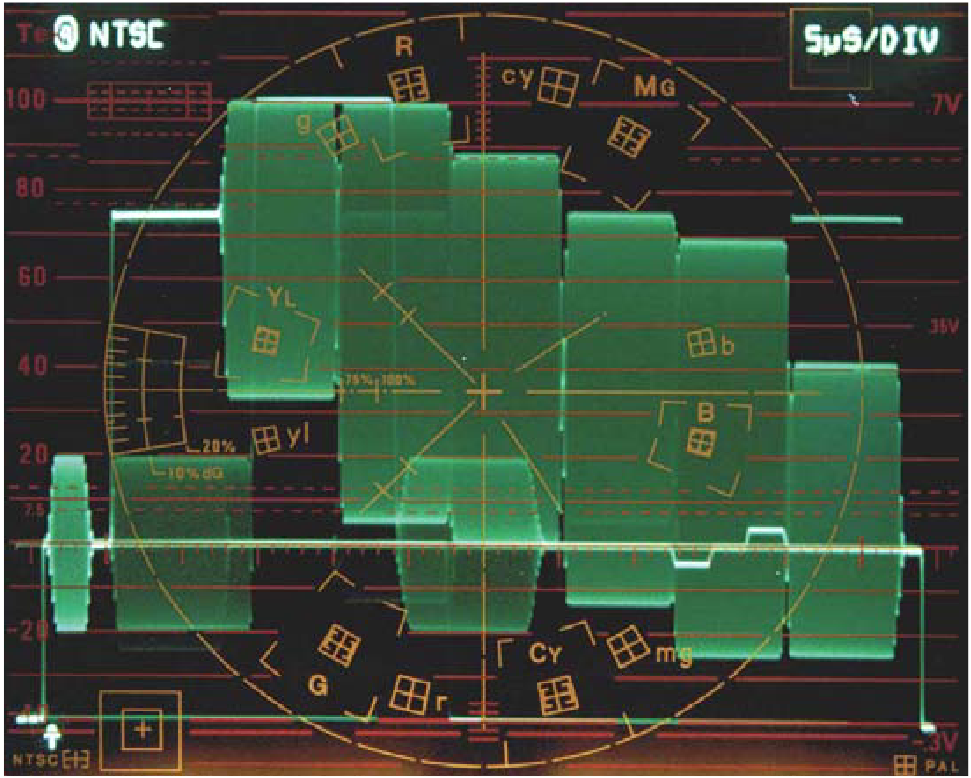

Additional measurements may be unique to the system being monitored. You may want to demodulate the NTSC or PAL color information for display on an analog vectorscope. You may want to see an X vs. Y display of the color-difference channels of a digital component signal to simulate an analog vector display without creating or demodulating a color subcarrier. You may want to observe the data content of a digital signal directly with a numeric or logic level display. And you will want to observe gamut of the analog or digital signal. Gamut is covered in greater detail in Appendix A – Gamut, Legal, Valid.

Assessment of video signal degradation

Some of the signal degradations we were concerned with in analog NTSC or PAL are less important in standard definition component video. Degradations become important again for even more basic reasons as we move to high-definition video. If we consider the real analog effects, they are the same. We sought signal integrity in analog to avoid a degradation of color video quality, but in high-definition we can start to see the defect itself.

Video amplitude

The concept of unity gain through a system has been fundamental since the beginning of television. Standardization of video amplitude lets us design each system element for optimum signal-to-noise performance and freely interchange signals and signal paths. A video waveform monitor, a specialized form of oscilloscope, is used to measure video amplitude. When setting analog video amplitudes, it is not sufficient to simply adjust the output level of the final piece of equipment in the signal path. Every piece of equipment should be adjusted to appropriately transfer the signal from input to output.

In digital formats, maintenance of video amplitude is even more important. Adequate analog video amplitude into the system assures that an optimum number of quantization levels are used in the digitizing process to reproduce a satisfactory picture. Maintaining minimum and maximum amplitude excursions within limits assures the video voltage amplitude will not be outside the range of the digitizer. Aside from maintaining correct color balance, contrast, and brightness, video amplitude must be controlled within gamut limits legal for transmission and valid for conversion to other video formats. In a properly designed unity-gain video system, video amplitude adjustments will be made at the source and will be correct at the output.

In the analog domain, video amplitudes are defined, and the waveform monitor configured to a standard for the appropriate format. NTSC signals will be 140 IRE units, nominally one volt, from sync tip to white level. The NTSC video luminance range (Figure 4) is 100 IRE, nominally 714.3 mV, which may be reduced by 53.5 mV to include a 7.5 IRE black level setup. Depending on color information, luminance plus chrominance components may extend below and above this range. NTSC sync is –40 IRE units, nominally –285.7 mV from blanking level to sync tip. The NTSC video signal is generally clamped to blanking level and the video monitor is set to extinguish at black level.

PAL signals are also formatted to one-volt sync tip to white level, with a video luminance range of 700 mV, with no setup. PAL sync is –300 mV. The signal is clamped, and the monitor brightness set to extinguish at black level. Chrominance information may extend above and below the video luminance range.
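The NTSC levels quoted above all follow from one scale factor: 140 IRE spans nominally one volt. The short sketch below simply reproduces that arithmetic. The function name `ire_to_mv` is ours for illustration and does not come from any instrument or standard.

```python
# Illustrative arithmetic only: NTSC scale where 140 IRE spans 1 V (1000 mV).
MV_PER_IRE = 1000.0 / 140.0  # about 7.143 mV per IRE unit


def ire_to_mv(ire: float) -> float:
    """Convert a level in IRE units to millivolts relative to blanking."""
    return ire * MV_PER_IRE


if __name__ == "__main__":
    # White level, black-level setup, and sync tip as described in the text.
    for name, ire in [("white", 100.0), ("setup", 7.5), ("sync tip", -40.0)]:
        print(f"{name:>8}: {ire:6.1f} IRE = {ire_to_mv(ire):7.1f} mV")
```

Computed this way, the 7.5 IRE setup is about 53.6 mV, which the text rounds to 53.5 mV.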

Video amplitude is checked on a stage-by-stage basis. An analog test signal with low-frequency components of known amplitude (such as blanking and white levels in the color bar test signal) will be connected to the input of each stage and the stage adjusted to replicate those levels at the output stage.

Regulatory agencies in each country, with international agreement, specify on-air transmission standards. NTSC, PAL, and SECAM video transmitters are amplitude-modulated with sync tip at peak power and video white level plus chroma extending towards minimum power. This modulation scheme is efficient and reduces visible noise, but is sensitive to linearity effects. Video levels must be carefully controlled to achieve a balance of economical full-power sync tip transmitter output and acceptable video signal distortion as whites and color components extend towards zero carrier power. If video levels are too low, the video signal/noise ratio suffers and electric power consumption goes up. If video levels are too high, the transmitter performs with greater distortion as the carrier nears zero power, and performance of the inter-carrier television audio receiver starts to fail.

Signal amplitude

In an analog system, the signal between studio components is a changing voltage directly representing the video. An analog video waveform monitor of the appropriate format makes it easy to view the voltage level of the analog video signal in relation to distinct timing patterns.

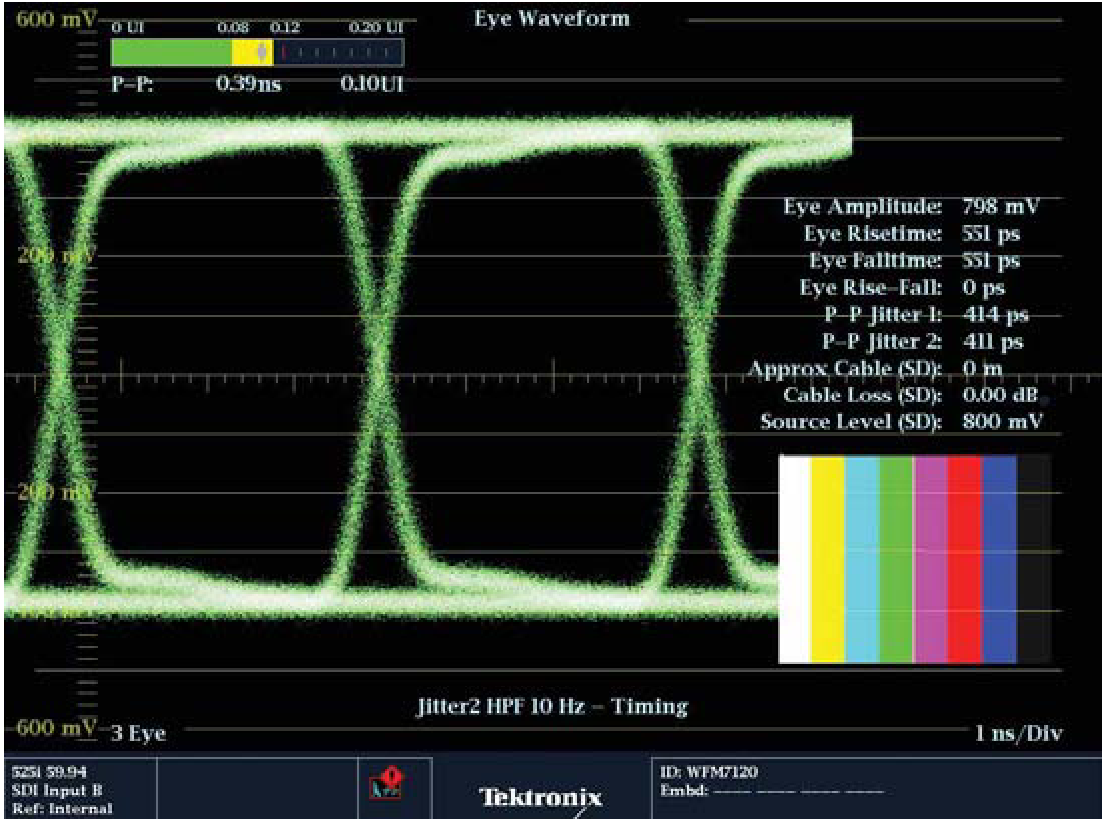

In a digital video system, the signal is a data “carrier” in the transport layer; a stream of data representing video information. This data is a series of analog voltage changes (Figures 5 and 6) that must be correctly identified as high or low at expected times to yield information on the content. The transport layer is an analog signal path that just carries whatever is input to its destination. The digital signal starts out at a level of 800 mV and its spectral content at half the clock frequency at the destination determines the amount of equalization applied by the receiver.

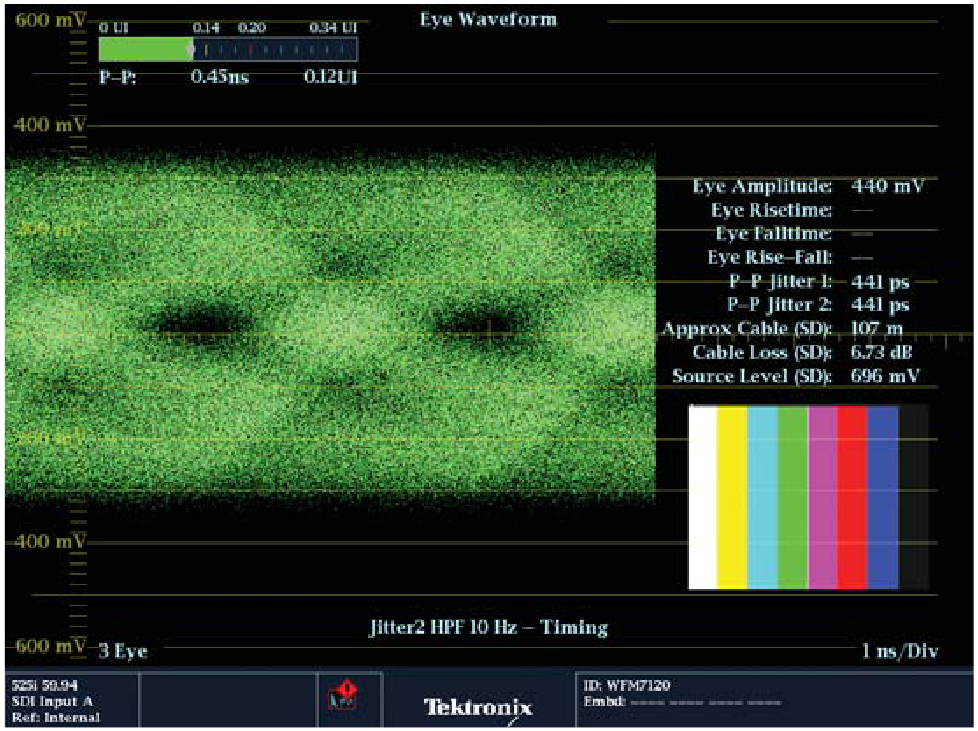

Digital signals in the transport layer can be viewed with a high frequency oscilloscope or with a video waveform monitor such as the Tektronix WFM7120/WFM6120 or WVR7120 with EYE option for either standard or high-definition formats. In the eye pattern mode, the waveform monitor operates as an analog sampling oscilloscope with the display swept at a video rate. The equivalent bandwidth is high enough, the return loss great enough, and measurement cursors appropriately calibrated to accurately measure the incoming data signal. The rapidly changing data in the transport layer is a series of ones and zeros overlaid to create an eye pattern. Eye pattern testing is most effective when the monitor is connected to the device under test with a short cable run, enabling use of the monitor in its non-equalized mode. With long cable runs, the data tends to disappear in the noise and the equalized mode must be used. While the equalized mode is useful in confirming headroom, it does not provide an accurate indicator of the signal at the output of the device under test. The PHY option also provides additional transport layer information such as jitter display and automated measurements of eye amplitude and provides a direct measurement readout of these parameters.

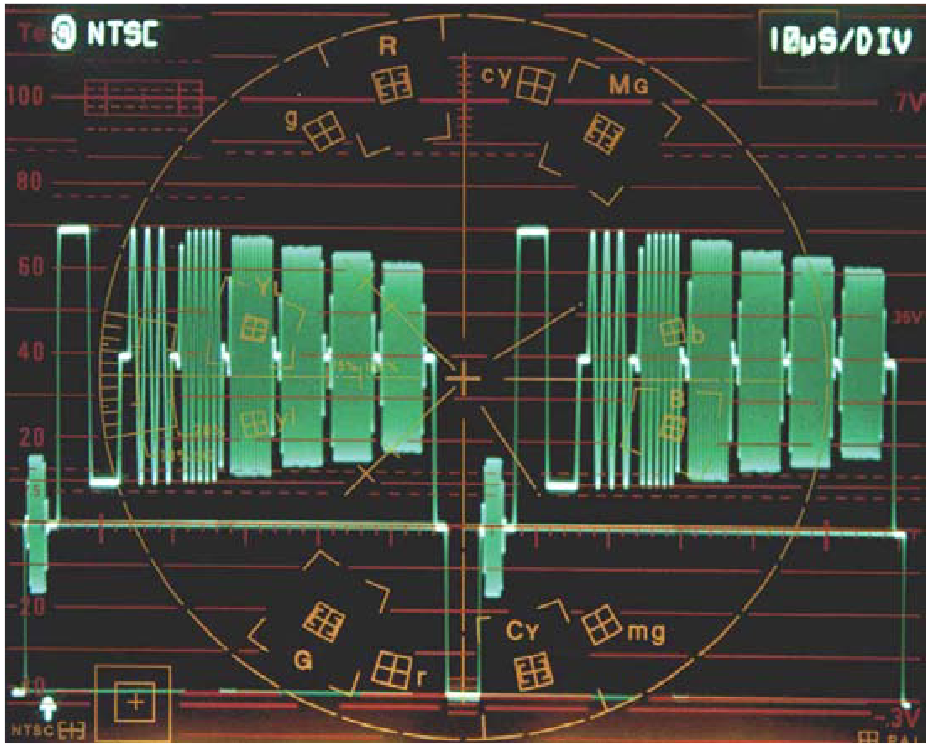

Since the data transport stream contains components that change between high and low at rates of 270 Mb/s for standard definition ITU-R BT.601 component video, up to 1.485 Gb/s for some high-definition formats (SMPTE 292M), the ones and zeros will be overlaid (Figure 72) for display on a video waveform monitor. This is an advantage since we can now see the cumulative data over many words, to determine any errors or distortions that might intrude on the eye opening and make recovery of the data high or low by the receiver difficult. Digital waveform monitors such as the Tektronix WFM7120/6120 series for multiple digital formats provide a choice of synchronized sweeps for the eye pattern display so word, line, and field disturbances may be correlated.
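The serial rates quoted above can be reconstructed from the component sampling rates. The sketch below assumes the usual 4:2:2 sampling frequencies, which come from the standards rather than from this text: 13.5 MHz luma and 6.75 MHz per chroma channel for standard definition, and 74.25 MHz luma and 37.125 MHz per chroma channel for high definition, each with 10-bit words. Some high-definition formats run at these rates divided by 1.001, which this sketch ignores.

```python
def serial_bit_rate(luma_hz: float, chroma_hz: float, bits_per_word: int = 10) -> float:
    """Serial rate = (Y + Cb + Cr samples per second) * bits per word."""
    words_per_second = luma_hz + 2 * chroma_hz
    return words_per_second * bits_per_word


# Assumed 4:2:2 sampling frequencies (not stated in the text above).
SD_BIT_RATE = serial_bit_rate(13.5e6, 6.75e6)     # 270 Mb/s
HD_BIT_RATE = serial_bit_rate(74.25e6, 37.125e6)  # 1.485 Gb/s

if __name__ == "__main__":
    print(f"SD: {SD_BIT_RATE / 1e6:.0f} Mb/s")
    print(f"HD: {HD_BIT_RATE / 1e9:.3f} Gb/s")
```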

The digital video waveform display that looks like a traditional analog waveform (baseband video) is really an analog waveform recreated by the numeric data in the transport layer. The digital data is decoded into high-quality analog component video that may be displayed and measured as an analog signal. Although monitoring in the digital path is the right choice, many of the errors noted in digital video will have been generated earlier in the analog domain.

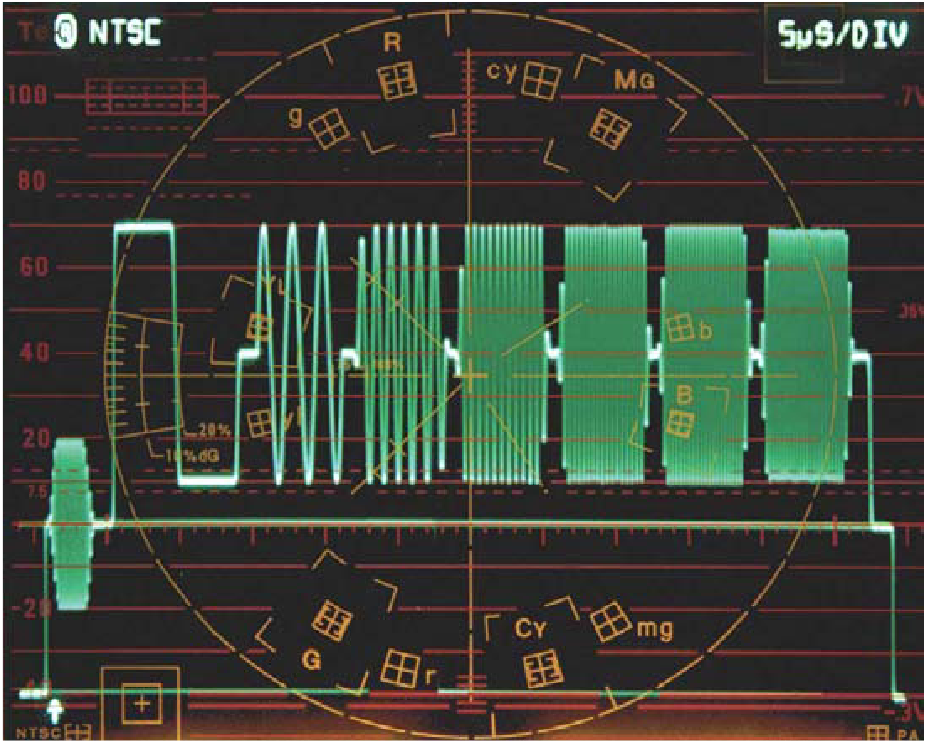

Frequency response

In an analog video system, video frequency response will be equalized where necessary to compensate loss of high-frequency video information in long cable runs. The goal is to make each stage of the system “flat” so all video frequencies travel through the system with no gain or loss. A multiburst test signal (Figure 7) can be used to quickly identify any required adjustment. If frequency packets in the multiburst signal are not the same amplitude at the output stage (Figure 8), an equalizing video distribution amplifier may be used to compensate, restoring the multiburst test signal to its original value.
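As a rough illustration of reading a multiburst result, the sketch below expresses each packet's output amplitude in decibels relative to its input amplitude, so a flat stage reads 0 dB at every frequency. The packet frequencies and amplitudes are invented for the example and are not measurements from any real system.

```python
import math


def packet_response_db(reference_mv: float, measured_mv: float) -> float:
    """Gain of one multiburst packet in dB relative to its reference amplitude."""
    return 20.0 * math.log10(measured_mv / reference_mv)


if __name__ == "__main__":
    reference_mv = 420.0  # hypothetical packet amplitude at the stage input
    # Hypothetical (frequency in MHz, amplitude in mV) readings at the stage output.
    measured = [(0.5, 420.0), (1.0, 418.0), (2.0, 410.0), (3.0, 395.0), (4.2, 370.0)]
    for freq_mhz, amp_mv in measured:
        print(f"{freq_mhz:4.1f} MHz: {packet_response_db(reference_mv, amp_mv):+5.2f} dB")
```

Packets that read progressively more negative toward the high-frequency end are the pattern an equalizing distribution amplifier is meant to correct.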

In a digital system, high-frequency loss affects only the energy in the transport data stream (the transport layer), not the data numbers (the data layer) so there is no effect on video detail or color until the high-frequency loss is so great the data numbers cannot be recovered. The equalizer in the receiver will compensate automatically for high-frequency losses in the input. The system designer will take care to keep cable runs short enough to achieve near 100% data integrity and there is no need for frequency response adjustment. Any degradation in video frequency response will be due to analog effects.

Group delay

Traditional analog video designs, for standard definition systems, have allowed on the order of 10 MHz bandwidth and have provided very flat frequency response through the 0-6 MHz range containing the most video energy. Group-delay error, sometimes referred to as envelope delay or frequency-dependent phase error, results when energy at one frequency takes a longer or shorter time to transit a system than energy at other frequencies, an effect often associated with bandwidth limitations. The effect seen in the picture would be an overshoot or rounding of a fast transition between lower and higher brightness levels. In a composite NTSC or PAL television system, the color in the picture might be offset to the left or right of the associated luminance.

The largest contributors to group-delay error are the NTSC/PAL encoder, the sound-notch filter, and the vestigial-sideband filter in the high-power television station transmitter, and of course the complementary chroma bandpass filters in the television receiver’s NTSC or PAL decoder. From an operational standpoint, most of the effort to achieve a controlled group delay response centers in the analog transmitter plant. It is routine, however, to check group delay, or phase error, through the analog studio plant to identify gross errors that may indicate a failure in some individual device.

Group delay error in a studio plant is easily checked with a pulse and bar test signal (Figure 9). This test signal includes a half-sinusoidal 2T pulse and a low-frequency white bar with fast, controlled rise and fall times. A 2T pulse with energy at half the system bandwidth causes a low level of ringing which should be symmetrical around the base of the pulse. If the high-frequency energy in the edge gets through faster or slower than the low-frequency energy, the edge will be distorted (Figure 10). If high-frequency energy is being delayed, the ringing will occur later, on the right side of the 2T pulse.

The composite pulse and bar test signal has a feature useful in the measurement of system phase response. In composite system testing, a 12.5T or 20T pulse modulated with energy at subcarrier frequency is used to quickly check both chroma-luma delay and relative gain at subcarrier frequency vs. a low frequency. A flat baseline indicates that both gain and delay are correct. Any bowing upward of the baseline through the system indicates a lower gain at the subcarrier frequency. Bowing downward indicates higher gain at the subcarrier frequency. Bowing upward at the beginning and downward at the end indicates high-frequency energy has arrived later and vice versa. In a component video system, with no color subcarrier, the 2T pulse and the edge of the bar signal is of most interest.

A more comprehensive group delay measurement may be made using a multi-pulse or sin x/x pulse and is indicated when data, such as teletext or Sound-in-Sync is to be transmitted within the video signal.

Digital video system components use anti-alias and reconstruction filters in the encoding/decoding process to and from the analog domain. The cutoff frequencies of these internal filters are about 5.75 MHz and 2.75 MHz for standard definition component video channels, so they do react to video energy, but this energy is less than is present in the 1 MHz and 1.25 MHz filters in the NTSC or PAL encoder. Corresponding cutoff frequencies for filters in digital high-definition formats are about 30 MHz for luma and 15 MHz for chroma information. The anti-alias and reconstruction filters in digital equipment are well corrected and are not adjustable operationally.

Non-linear effects

An analog circuit may be affected in a number of ways as the video operating voltage changes. Gain of the amplifier may be different at different operating levels (differential gain) causing incorrect color saturation in the NTSC or PAL video format. In a component analog format, brightness and color values may shift.

Differential gain

Differential gain is an analog effect, and will not be caused or corrected in the digital domain. It is possible, however, that digital video will be clipped if the signal drives the analog-to-digital converter into the range of reserved values. This gamut violation will cause incorrect brightness of some components and color shift. Please refer to Appendix A – Gamut, Legal, Valid.

Differential phase

Time delay through the circuit may change with the different video voltage values. This is an analog effect, not caused in the digital domain. In NTSC this will change the instantaneous phase (differential phase) of the color subcarrier resulting in a color hue shift with a change in brightness. In the PAL system, this hue shift is averaged out, shifting the hue first one way then the other from line to line. The effect in a component video signal, analog or digital, may produce a color fringing effect depending on how many of the three channels are affected. The equivalent effect in high definition may be a ring or overshoot on fast changes in brightness level.

Digital System Testing

Stress testing

Unlike analog systems that tend to degrade gracefully, digital systems tend to work without fault until they crash. To date, there are no in-service tests that will measure the headroom of the SDI signal. Out-of-service stress tests are required to evaluate system operation. Stress testing consists of changing one or more parameters of the digital signal until failure occurs. The amount of change required to produce a failure is a measure of the headroom. Starting with the specifications in the relevant serial digital video standard (SMPTE 259M or SMPTE 292M), the most intuitive way to stress the system is to add cable until the onset of errors. Other tests would be to change amplitude or risetime, or add noise and/or jitter to the signal. Each of these tests is evaluating one or more aspects of the receiver performance, specifically automatic equalizer range and accuracy and receiver noise characteristics. Experimental results indicate that cable-length testing, in particular when used in conjunction with the SDI check field signals described in the following sections, is the most meaningful stress test because it represents real operation. Stress testing the receiver’s ability to handle amplitude changes and added jitter are useful in evaluating and accepting equipment, but not too meaningful in system operation. Addition of noise or change in risetime (within reasonable bounds) has little effect on digital systems and is not important in stress tests.

Cable-length stress testing

Cable-length stress testing can be done using actual coax or a cable simulator. Coax is the simplest and most practical method. The key parameter to be measured is onset of errors because that defines the crash point. With an error measurement method in place, the quality of the measurement will be determined by the sharpness of the knee of the error curve. An operational check of the in-plant cabling can be easily done using the waveform monitor. This in-service check displays key information on the signal as it leaves the previous source and how it survives the transmission path. Figure 11 shows the effect of additional length of cable to the signal.
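One way to turn a cable-length stress test into a headroom figure is sketched below. It takes the shortest length at which any errors were counted as the crash point, then subtracts the length used in normal operation. The measurement list, the function names, and the 150 m operating length are all hypothetical.

```python
from typing import Iterable, Optional, Tuple


def onset_of_errors(measurements: Iterable[Tuple[float, int]]) -> Optional[float]:
    """Return the shortest cable length (m) at which any errors were counted."""
    for length_m, errors in sorted(measurements):
        if errors > 0:
            return length_m
    return None  # no errors at any tested length


def cable_headroom(measurements: Iterable[Tuple[float, int]],
                   operating_length_m: float) -> Optional[float]:
    """Headroom = crash-point length minus the length used in operation."""
    crash = onset_of_errors(measurements)
    return None if crash is None else crash - operating_length_m


if __name__ == "__main__":
    # Hypothetical (cable length in m, errors counted in the test interval).
    results = [(50, 0), (100, 0), (150, 0), (200, 0), (250, 3), (300, 120)]
    print("onset of errors:", onset_of_errors(results), "m")
    print("headroom at 150 m:", cable_headroom(results, 150.0), "m")
```

The jump from 3 to 120 errors in one step of this invented data is the kind of sharp knee the text describes.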

SDI check field

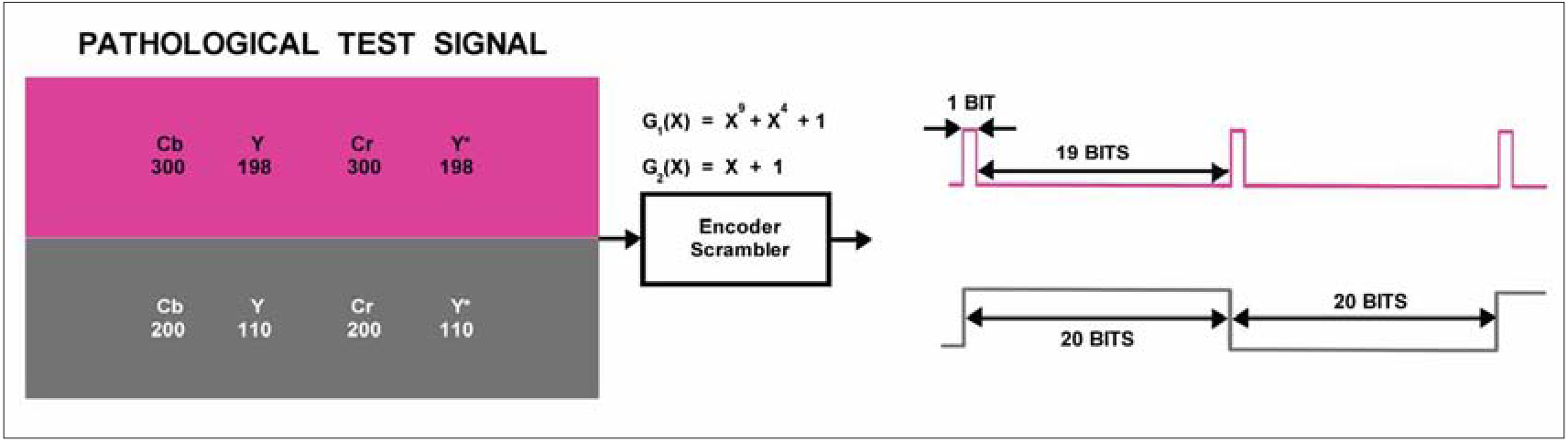

The SDI Check Field (also known as a “pathological signal”) is a full-field test signal and therefore must be used out-of-service. It’s a difficult signal for the serial digital system to handle and is a very important test to perform. The SDI Check Field is specified to have a maximum amount of low-frequency energy, after scrambling, in two separate parts of the field. Statistically, this low-frequency energy will occur about once per frame.

One component of the SDI Check Field tests equalizer operation by generating a sequence of 19 zeros followed by a 1 (or 19 ones followed by 1 zero). This occurs about once per field as the scrambler attains the required starting condition, and when present it will persist for the full line and terminate with the EAV packet. This sequence produces a high DC component that stresses the analog capabilities of the equipment and transmission system handling the signal. This part of the test signal may appear at the top of the picture display as a shade of purple, with the value of luma set to 198h, and both chroma channels set to 300h.

The other part of the SDI Check Field signal is designed to check phase locked loop performance with an occasional signal consisting of 20 zeros followed by 20 ones. This provides a minimum number of zero crossings for clock extraction. This part of the test signal may appear at the bottom of the picture display as a shade of gray, with luma set to 110h and both chroma channels set to 200h. Some test signal generators will use a different signal order, with the picture display in shades of green. The results will be the same.

Either of the signal components (and other statistically difficult colors) might be present in computer-generated graphics so it is important that the system handle the SDI Check Field test signal without errors. The SDI Check Field is a fully legal signal for component digital but not for the composite domain. The SDI Check Field (Figure 12) is defined in SMPTE Recommended Practice RP178 for SD and RP198 for HD.
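To see why these two sequences are stressful, it helps to compare their average level and transition density with an ordinary alternating pattern. The sketch below treats each sequence as a repeating run of logic levels. It does not model the SDI scrambler or the channel coding, only the resulting bit patterns described above.

```python
from typing import List, Tuple


def run_stats(bits: List[int]) -> Tuple[float, float]:
    """Return (mean level, transitions per bit) for a repeating bit pattern."""
    n = len(bits)
    mean_level = sum(bits) / n
    # Count level changes, including the wrap from the last bit back to the first.
    transitions = sum(bits[i] != bits[(i + 1) % n] for i in range(n))
    return mean_level, transitions / n


EQUALIZER_PATTERN = [0] * 19 + [1]   # 19 zeros followed by a 1
PLL_PATTERN = [0] * 20 + [1] * 20    # 20 zeros followed by 20 ones
ALTERNATING = [0, 1] * 10            # reference pattern with a transition every bit

if __name__ == "__main__":
    for name, pattern in [("equalizer", EQUALIZER_PATTERN),
                          ("PLL", PLL_PATTERN),
                          ("alternating", ALTERNATING)]:
        mean_level, density = run_stats(pattern)
        print(f"{name:>11}: mean level {mean_level:.2f}, {density:.2f} transitions/bit")
```

The equalizer pattern sits at a mean level of 0.05 rather than 0.5, which is the large DC component the text refers to. The PLL pattern is balanced but offers only one transition every 20 bits for clock extraction.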

In-service testing

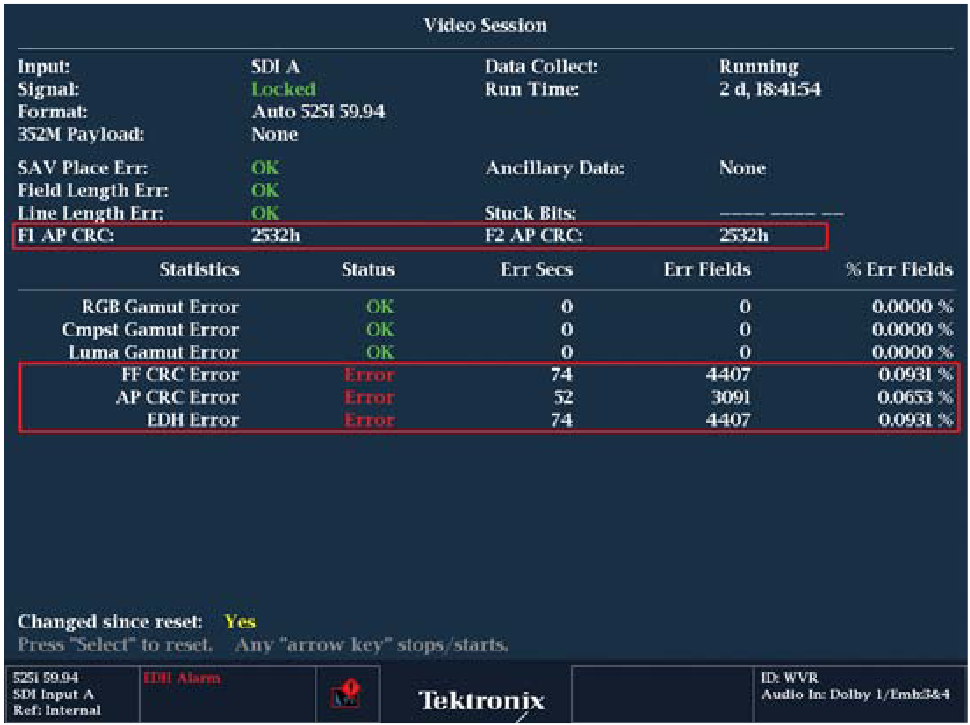

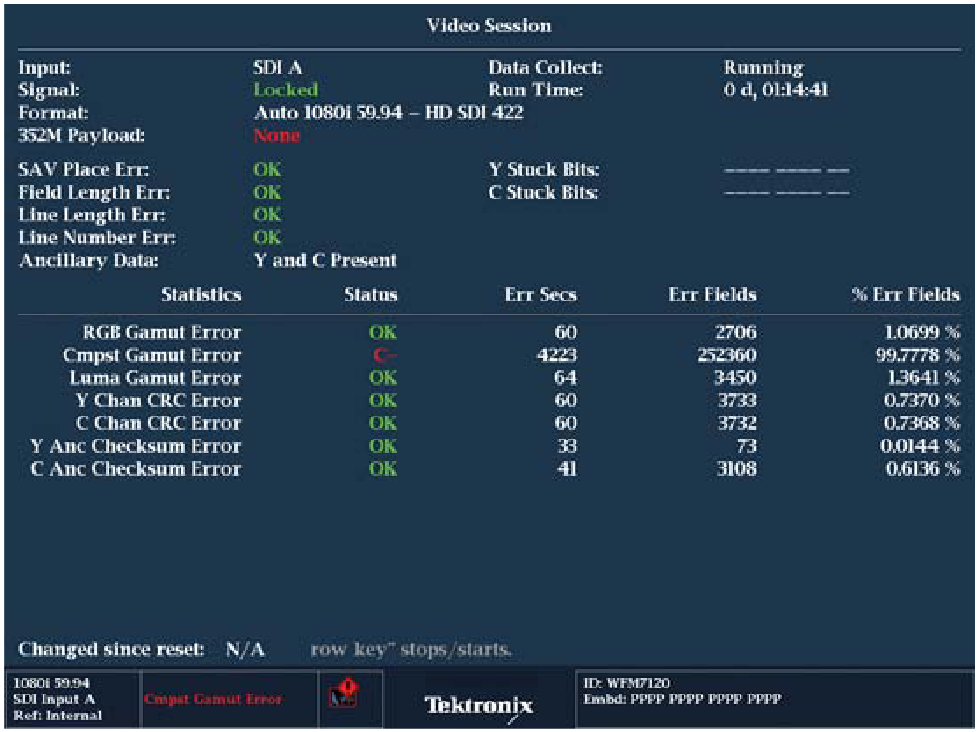

CRC (Cyclic Redundancy Check) coding can be used to provide information to the operator, or even sound an external alarm, in the event data does not arrive intact. A CRC is present in each video line in high-definition formats, and may be optionally inserted into each field in standard definition formats. A CRC is calculated and inserted into the data signal for comparison with a newly calculated CRC at the receiving end.

For standard definition formats, the CRC value is inserted into the vertical interval, after the switch point. SMPTE RP165 defines the optional method for the detection and handling of data errors in standard definition video formats (EDH, Error Detection and Handling). Full Field and Active Picture data are separately checked and a 16-bit CRC word generated once per field. The Full Field (FF) check covers all data transmitted except in lines reserved for vertical interval switching (lines 9-11 in 525, or lines 5-7 in 625 line standards). The Active Picture (AP) check covers only the active video data words, between but not including SAV and EAV. Half-lines of active video are not included in the AP check. Digital monitors may provide both a display of EDH CRC values and an alarm on AP or FF CRC errors (Figure 13).

In high-definition formats, SMPTE 292M defines separate CRCs for luma and chroma that follow the EAV and line number words, so CRC checking is on a line-by-line basis for Y-CRC and C-CRC. The user can then monitor the number of errors received along the transmission path. Ideally, the instrument will show zero errors, indicating an error-free transmission path. If the error count starts to rise, the path deserves attention. As errors increase to one every hour or minute, the system is getting closer to the digital cliff. The transmission path should be investigated to isolate the cause before the system reaches the digital cliff, where it becomes difficult to isolate the error within the path.

Figure 80 shows the video session display of the WFM7120, with the accumulated CRC errors shown for both the Y and C channels of the high-definition signal. Within the display, not only are the errors counted, but they are also displayed in relation to the number of fields and seconds of the time interval being monitored. Resetting the time will restart the calculation and monitoring of the signal. If significant CRC errors start to be seen, the transmission path should be investigated further by using the eye and jitter displays. If errors occur every minute or every second, the system is approaching the digital cliff.
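The compare-at-the-receiver idea can be sketched in a few lines. This is not a bit-exact EDH or SMPTE 292M implementation: it works on bytes rather than 10-bit video words, and it uses Python's `binascii.crc_hqx` (a 16-bit CRC with polynomial x^16 + x^12 + x^5 + 1) purely as a stand-in checksum. The helper names and the test payload are hypothetical.

```python
import binascii
from typing import Iterable, Tuple


def field_crc(payload: bytes) -> int:
    """16-bit CRC of one block of data (stand-in for a per-field check word)."""
    return binascii.crc_hqx(payload, 0)


def count_crc_errors(fields: Iterable[Tuple[bytes, int]]) -> int:
    """Count blocks whose recomputed CRC differs from the CRC sent with them."""
    return sum(1 for payload, sent_crc in fields if field_crc(payload) != sent_crc)


if __name__ == "__main__":
    original = bytes(range(64))          # hypothetical data block at the source
    sent_crc = field_crc(original)       # check word inserted by the sender

    damaged = bytearray(original)
    damaged[10] ^= 0x04                  # a single bit flipped in transit

    received = [(original, sent_crc), (bytes(damaged), sent_crc)]
    print("CRC errors:", count_crc_errors(received))
```

Accumulating this count against elapsed time gives the kind of errors-per-interval reading described above.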

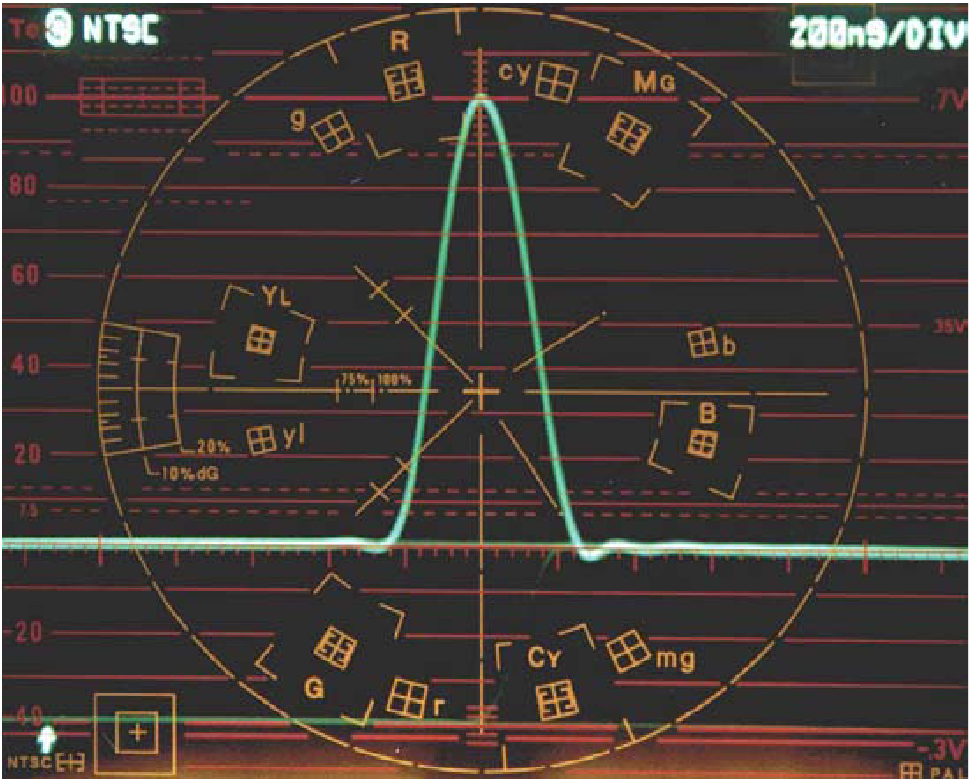

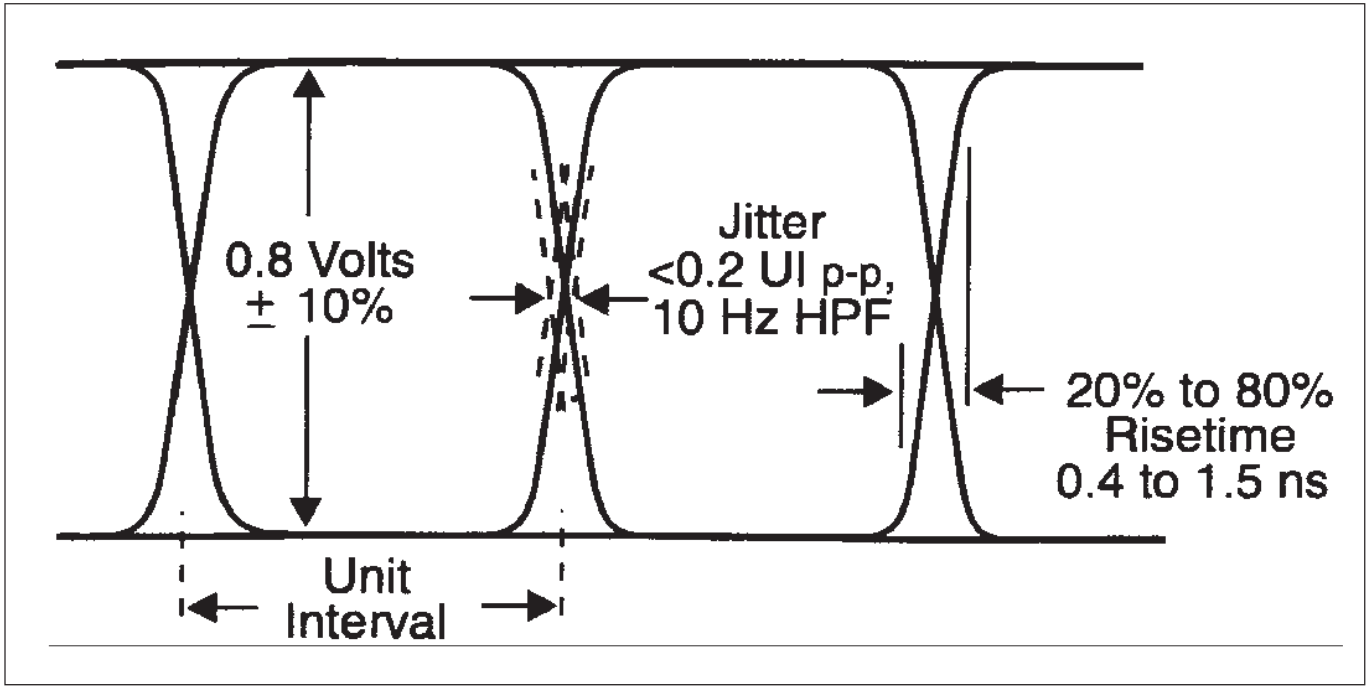

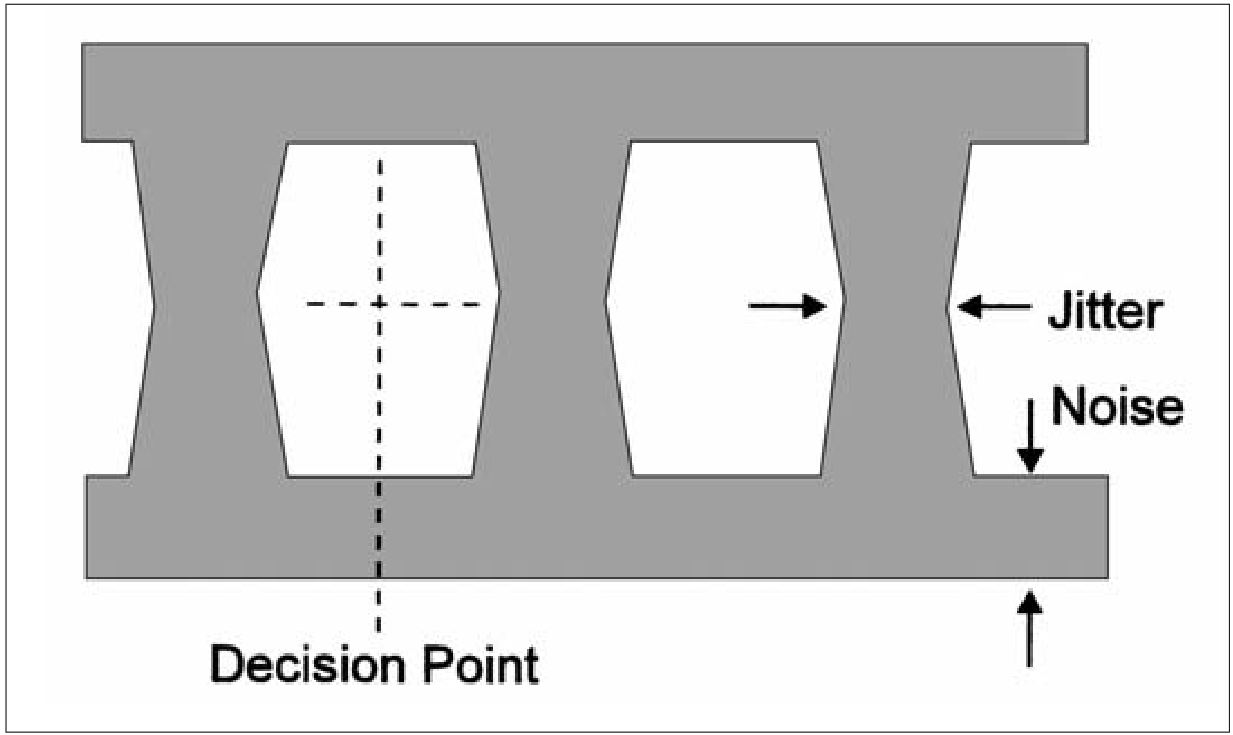

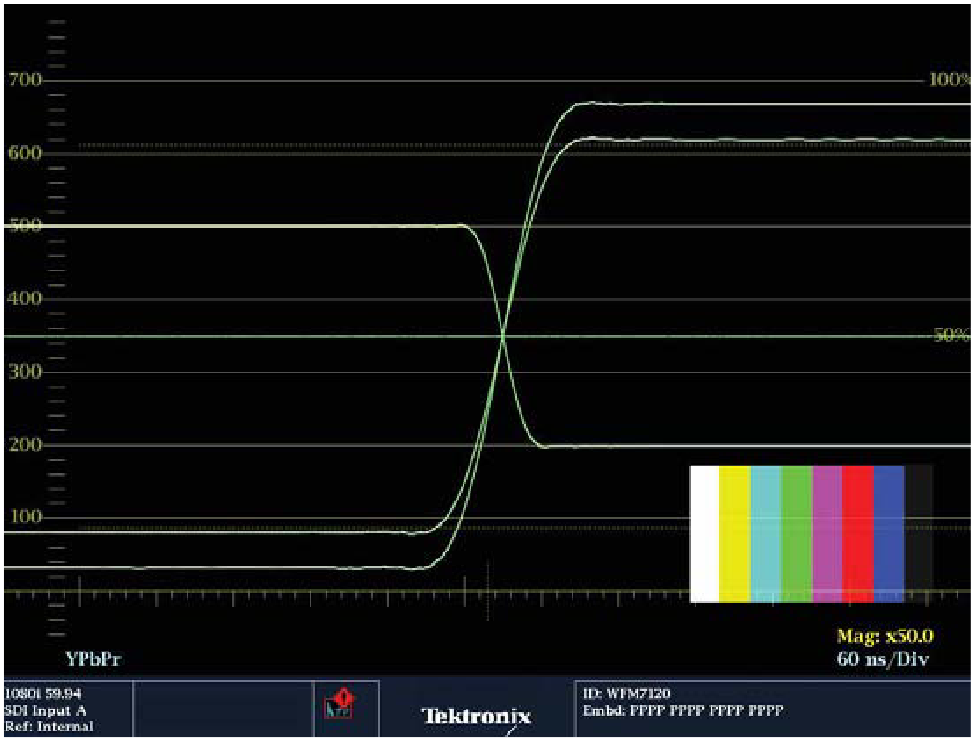

Eye-pattern testing

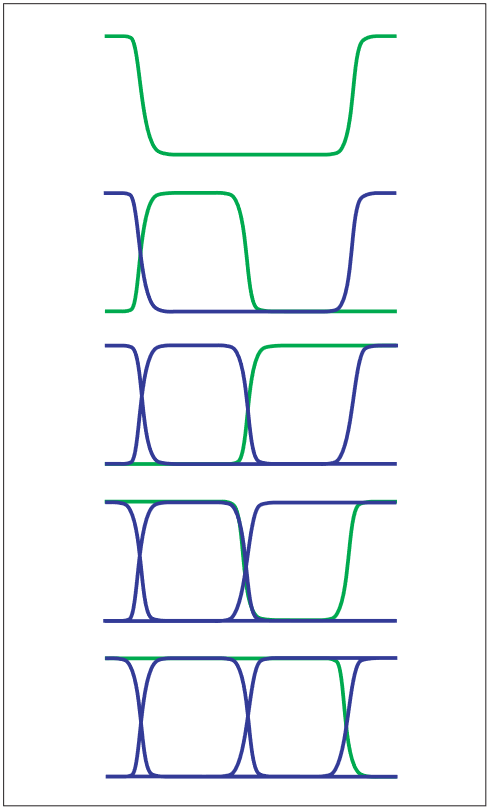

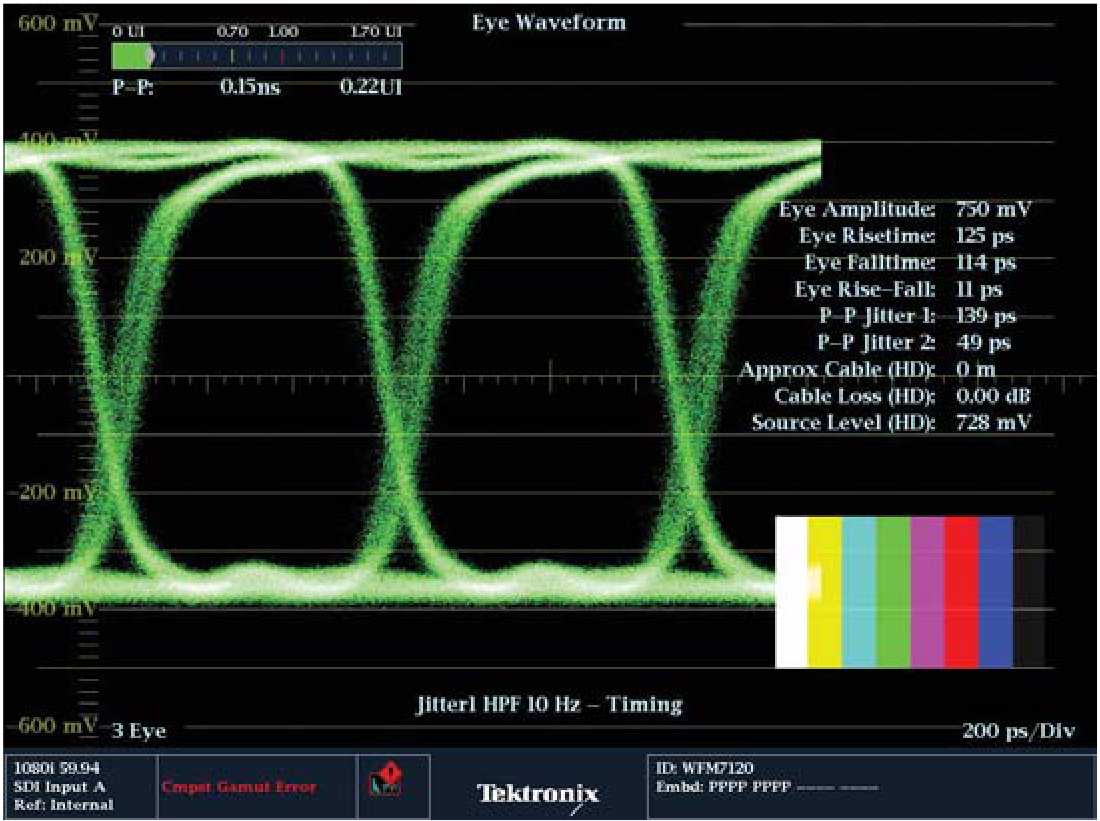

The eye pattern (Figure 15) is an oscilloscope view of the analog signal transporting the data. The signal highs and lows must be reliably detectable by the receiver to yield real-time data without errors. The basic parameters measured with the eye-pattern display are signal amplitude, risetime, and overshoot. Jitter can also be measured with the eye pattern if the clock is carefully specified. The eye pattern is viewed as it arrives, before any equalization. Because of this, most eye-pattern measurements will be made near the source, where the signal is not dominated by noise and frequency rolloff. Important specifications include amplitude, risetime, and jitter, which are defined in the standards SMPTE 259M, SMPTE 292M, and RP184. Frequency, or period, is determined by the television sync generator developing the source signal, not the serialization process.

A unit interval (UI) is defined as the time between two adjacent signal transitions, which is the reciprocal of clock frequency. The unit interval is 3.7 ns for digital component 525 and 625 (SMPTE 259M) and 673.4 ps for Digital High Definition (SMPTE 292M). A serial receiver determines if the signal is a “high” or a “low” in the center of each eye, thereby detecting the serial data. As noise and jitter in the signal increase through the transmission channel, certainly the best decision point is in the center of the eye (as shown in Figure 16). Some receivers select a point at a fixed time after each transition point. Any effect which closes the eye may reduce the usefulness of the received signal. In a communications system with forward error correction, accurate data recovery can be made with the eye nearly closed. With the very low error rates required for correct transmission of serial digital video, a rather large and clean eye opening is required after receiver equalization. This is because the random nature of the processes that close the eye have statistical “tails” that would cause an occasional, but unacceptable error.

Allowed jitter is specified as 0.2 UI. This is 740 ps for digital component 525 and 625 and 134.7 ps for digital high definition. Digital systems will work beyond this jitter specification, but will fail at some point. The basics of a digital system are to maintain a good-quality signal to keep the system healthy and prevent a failure which would cause the system to fall off the edge of the cliff.

Signal amplitude is important because of its relationship to noise, and because the receiver estimates the required high-frequency compensation (equalization) based on the half-clock-frequency energy remaining as the signal arrives. Incorrect amplitude at the sending end could result in an incorrect equalization being applied at the receiving end, causing signal distortions. Rise-time measurements are made from the 20% to 80% points as appropriate for ECL logic devices. Incorrect rise time could cause signal distortions such as ringing and overshoot, or if too slow, could reduce the time available for sampling within the eye. Overshoot will likely be caused by impedance discontinuities or poor return loss at the receiving or sending terminations.

Effective testing for correct receiving end termination requires a high-performance loop-through on the test instrument to see any defects caused by the termination under evaluation. Cable loss tends to reduce the visibility of reflections, especially at high-definition data rates of 1.485 Gb/s and above. High-definition digital inputs are usually terminated internally and in-service eye-pattern monitoring will not test the transmission path (cable) feeding other devices. Out-of-service transmission path testing is done by substituting a test signal generator for the source, and a waveform monitor with eye-pattern display in place of the normal receiving device.
Eye-pattern testing requires an oscilloscope with a known response well beyond the transport layer data rate and is generally measured with sampling techniques. The Tektronix VM700T, WVR7120, and WFM7120/WFM6120 provide eye-pattern measurement capability for standard definition (270 Mb/s data) and the WVR7120 or WFM7120 allows eye-pattern measurements on high-definition 1.485 Gb/s data streams. These digital waveform monitors provide several advantages because they are able to extract and display the video data as well as measure it. The sampled eye pattern can be displayed in a three-data-bit overlay (3 Eye mode), to show jitter uncorrelated to the 10-bit/20-bit data word, or the display can be set to show ten bits for SD signals or twenty bits for high-definition signals of word-correlated data. By synchronizing the waveform monitor sweep to video line and field rates, it is easy to see any DC shift in the data stream correlated to horizontal or vertical video information.
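The unit interval and jitter figures used in this section follow directly from the serial bit rate: one UI is the reciprocal of the bit rate, and the jitter allowance is 0.2 of that. A minimal sketch of the arithmetic, with function names chosen here for illustration:

```python
def unit_interval_ps(bit_rate_hz: float) -> float:
    """One unit interval in picoseconds: the reciprocal of the serial bit rate."""
    return 1e12 / bit_rate_hz


def jitter_limit_ps(bit_rate_hz: float, ui_fraction: float = 0.2) -> float:
    """Allowed jitter in picoseconds for a limit given as a fraction of a UI."""
    return ui_fraction * unit_interval_ps(bit_rate_hz)


if __name__ == "__main__":
    for name, rate in [("SD 270 Mb/s", 270e6), ("HD 1.485 Gb/s", 1.485e9)]:
        print(f"{name}: UI = {unit_interval_ps(rate):7.1f} ps, "
              f"0.2 UI = {jitter_limit_ps(rate):6.1f} ps")
```

For 270 Mb/s this gives a UI of about 3704 ps, which the text rounds to 3.7 ns before quoting a 740 ps jitter allowance.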

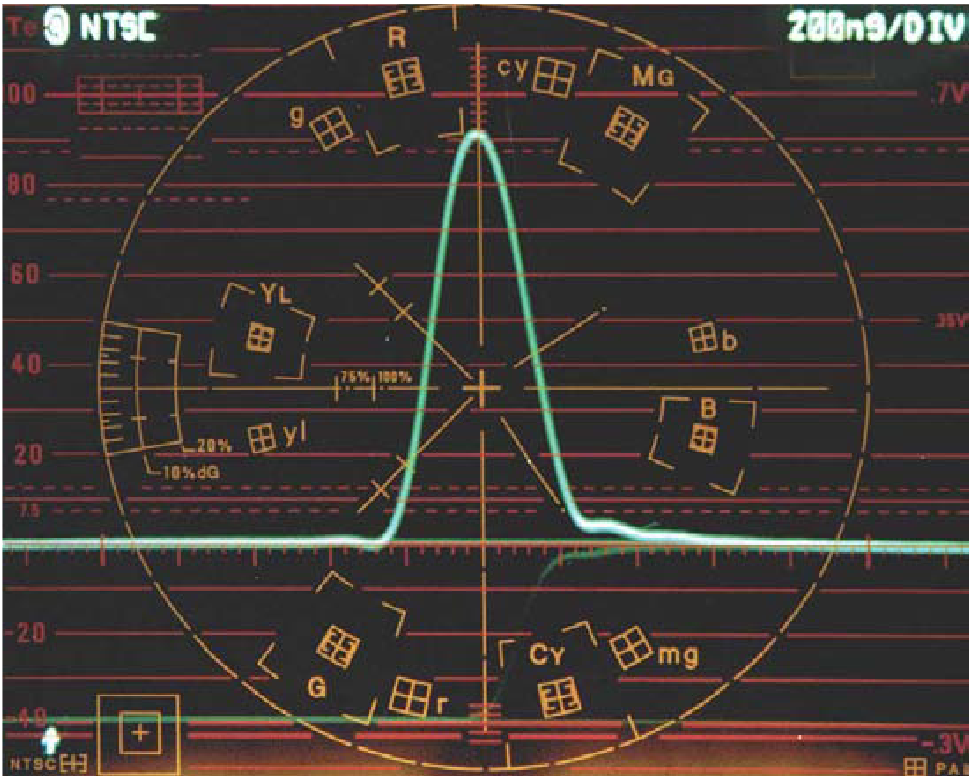

Understanding certain characteristics of the eye display can help in troubleshooting problems within the path of the signal. Proper termination within an HD-SDI system is even more critical because of the high clock rate of the signal. Improper termination will mean that not all of the energy will be absorbed by the receiving termination or device. This residual energy will be reflected back along the cable creating a standing wave. These reflections will produce ringing within the signal and the user will observe overshoots and undershoots on the eye display as shown in Figure 17. Note that this termination error by itself would not cause a problem in the signal being received. However, this error added cumulatively to other errors along the signal path will narrow the eye opening more quickly and decrease the receiver’s ability to recover the clock and data from the signal.

The eye display typically has the cross point of the transitions in the middle of the display, at the 50% point. If the rise time and fall time of the signal transitions are unequal, the cross point will move away from the 50% point by an amount that depends on the degree of inequality between the transitions. AC-coupling within a device will shift the high signal level closer to the fixed decision threshold, reducing noise margin. Typically, SDI signals have symmetric rise and fall times, but asymmetric line drivers and optical signal sources (lasers) can introduce non-symmetric transitions as shown in Figure 18. While significant, these source asymmetries do not have especially large impacts on signal rise and fall times. In particular, cable attenuation will generally have a much larger impact on signal transition times. Without appropriate compensation or other adjustments, asymmetries in SDI signals can reduce noise margins with respect to the decision threshold used in decoding and can lead to decoding errors.

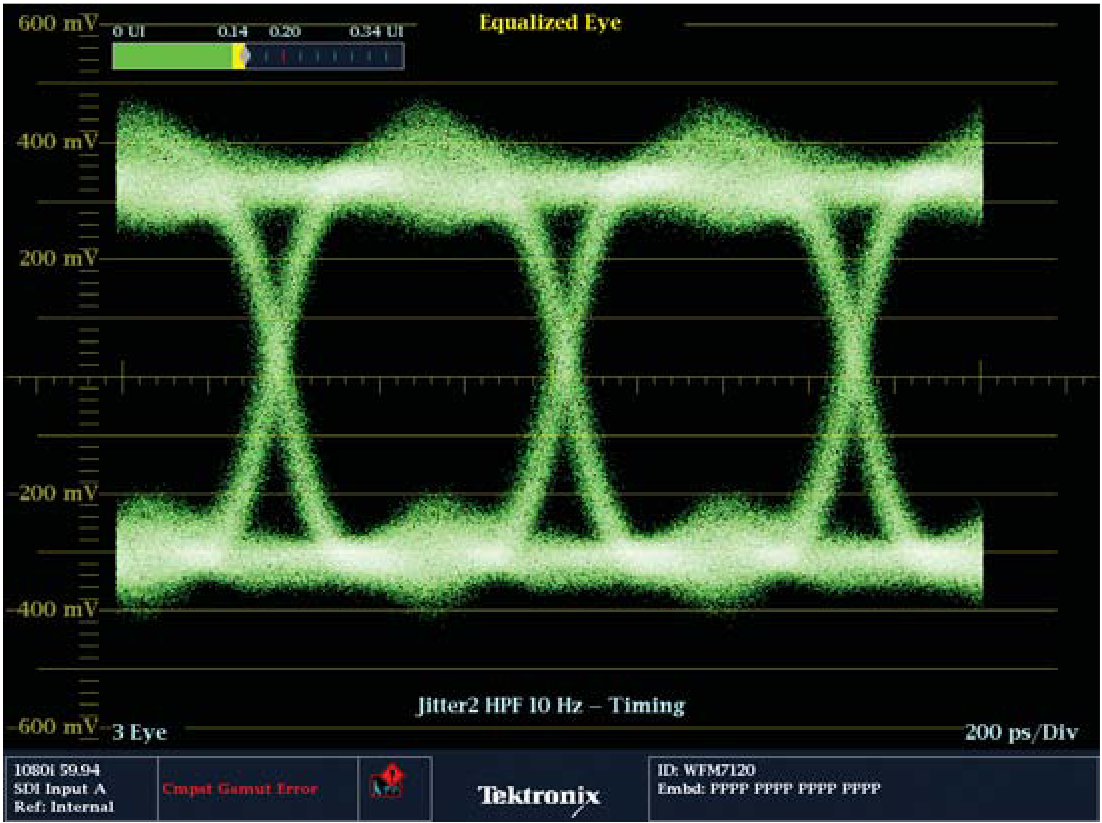

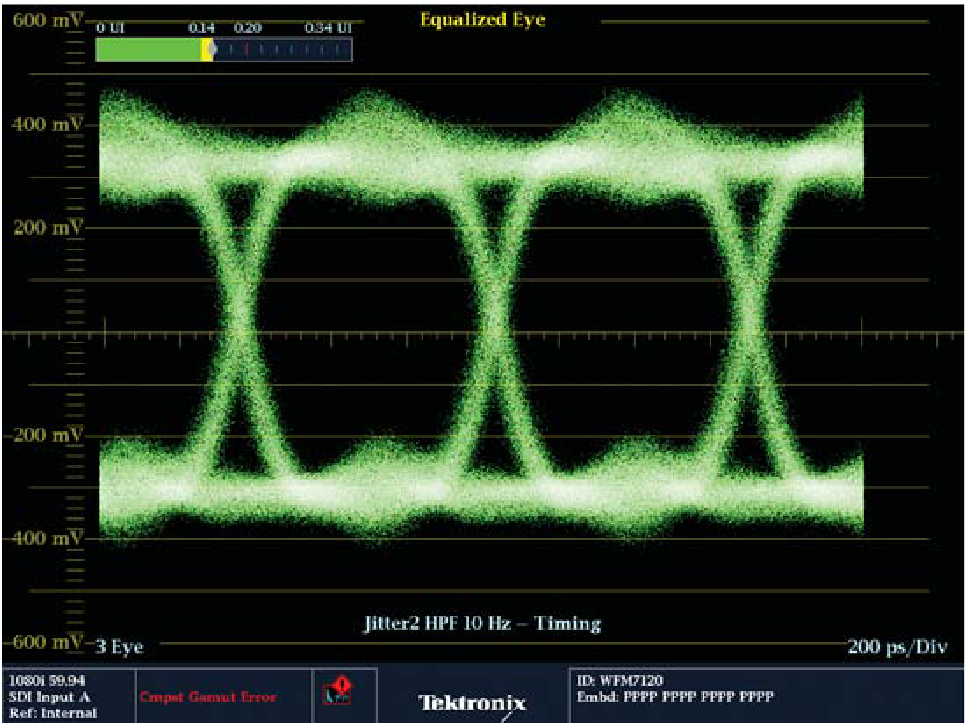

Adding lengths of cable between the source and the measurement instrument attenuates the signal amplitude and introduces frequency-dependent losses along the cable, producing longer rise and fall times. As cable length increases, the eye opening closes and is no longer clearly visible within the display. However, this signal can still be decoded correctly because the equalizer is able to recover the data stream. When the SDI signal has been degraded by a long length of cable, as in Figure 77, the equalized eye mode on the WFM7120/WFM6120 allows the user to observe the eye opening after the equalizer has corrected the signal, as shown in Figure 19. If the equalizer within the instrument is able to recover data, the equalized eye display should be open, and it is likely that a receiver with a suitable adaptive equalizer will also be able to recover this signal. However, it should be remembered that not all receivers use the same designs and there is a possibility that some device may still not be able to recover the signal. If the equalized eye display is partially or fully closed, then the receiver is going to have to work harder to recover the clock and data, and there is more potential for data errors to occur in the receiver. Data errors can produce sparkle effects in the picture, line dropouts, or even frozen images. At this point, the receiver at the end of the signal path is having problems extracting the clock and data from the SDI signal. By maintaining the health of the physical layer of the signal, we can ensure that these types of problems do not occur. The Eye and Jitter displays of the instrument can help troubleshoot these problems.

Jitter testing

Since there is no separate clock provided with the video data, a sampling clock must be recovered by detecting data transitions. This is accomplished by directly recovering energy around the expected clock frequency to drive a high-bandwidth oscillator (for example, a 5 MHz bandwidth 270 MHz oscillator for SD signals) locked in near-real-time with the incoming signal. This oscillator then drives a heavily averaged, low-bandwidth oscillator (for example, a 10 Hz bandwidth 270 MHz oscillator for SD signals). In a jitter measurement instrument, samples of the high- and low-bandwidth oscillators are then compared in a phase demodulator to produce an output waveform representing jitter. This is referred to as the “demodulator method.” Timing jitter is defined as the variation in time of the significant instants (such as zero crossings) of a digital signal relative to a jitter-free clock above some low frequency (typically 10 Hz). It would be preferable to use the original reference clock, but it is not usually available, so the heavily averaged oscillator in the measurement instrument is often used. Alignment jitter, or relative jitter, is defined as the variation in time of the significant instants (such as zero crossings) of a digital signal relative to a hypothetical clock recovered from the signal itself. This recovered clock will track jitter in the signal up to its upper clock recovery bandwidth, typically 1 kHz for SD and 100 kHz for HD signals. Measured alignment jitter includes those terms above this frequency. Alignment jitter shows signal-to-latch clock timing margin degradation.

Tektronix instruments such as the WFM6120 (Figure 20), WFM7120 and VM700T provide a selection of high-pass filters to isolate jitter energy. Jitter information may be unfiltered (the full 10 Hz to 5 MHz bandwidth) to display Timing Jitter, or filtered by a 1 kHz (–3 dB) high-pass filter to display 1 kHz to 5 MHz Alignment Jitter. Additional high-pass filters may be selected to further isolate jitter components. These measurement instruments provide a direct readout of jitter amplitude and a visual display of the demodulated jitter waveform to aid in isolating the cause of the jitter. It is quite common for a data receiver in a signal path to tolerate jitter considerably in excess of that specified by SMPTE recommendations but the build-up of jitter (jitter growth) through multiple devices could lead to unexpected failure. Jitter in bit-serial systems is discussed in SMPTE RP184, EG33, and RP192.
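The effect of the high-pass filter selection can be illustrated with a small simulation. The sketch below is not a model of any instrument: it assumes a simple first-order (single-pole) high-pass filter, and the two jitter components (0.15 UI peak at 50 Hz and 0.02 UI peak at 50 kHz) are invented values chosen only to show how a 10 Hz filter passes both components (timing jitter) while a 1 kHz filter largely removes the low-frequency one (SD alignment jitter).

```python
import math

SAMPLE_RATE_HZ = 1_000_000   # assumed sampling rate of the demodulated jitter
DURATION_S = 0.1             # 100 ms of simulated jitter waveform


def high_pass(samples, cutoff_hz, sample_rate_hz):
    """First-order high-pass filter with its -3 dB point at cutoff_hz."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate_hz
    alpha = rc / (rc + dt)
    out = []
    prev_in = samples[0]
    prev_out = 0.0
    for x in samples:
        prev_out = alpha * (prev_out + x - prev_in)
        prev_in = x
        out.append(prev_out)
    return out


def peak_to_peak(samples):
    return max(samples) - min(samples)


def simulate():
    """Return (timing_jitter_pp_ui, alignment_jitter_pp_ui) for the example."""
    n = int(SAMPLE_RATE_HZ * DURATION_S)
    jitter_ui = []
    for i in range(n):
        t = i / SAMPLE_RATE_HZ
        low = 0.15 * math.sin(2 * math.pi * 50 * t)       # hypothetical 50 Hz component
        high = 0.02 * math.sin(2 * math.pi * 50_000 * t)  # hypothetical 50 kHz component
        jitter_ui.append(low + high)
    timing = high_pass(jitter_ui, 10, SAMPLE_RATE_HZ)        # 10 Hz filter
    alignment = high_pass(jitter_ui, 1_000, SAMPLE_RATE_HZ)  # 1 kHz filter
    return peak_to_peak(timing), peak_to_peak(alignment)


if __name__ == "__main__":
    timing_pp, alignment_pp = simulate()
    print(f"Timing jitter (10 Hz HPF):   {timing_pp:.3f} UI p-p")
    print(f"Alignment jitter (1 kHz HPF): {alignment_pp:.3f} UI p-p")
```

In this example the alignment jitter reading is much smaller than the timing jitter reading because most of the simulated jitter energy lies below 1 kHz.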

Jitter within the SDI signal will change the time when a transition occurs and cause a widening of the overall transition point. This jitter can cause a narrowing or closing of the eye display and make the determination of the decision threshold more difficult. It is only possible to measure up to one unit interval of jitter within the eye display by the use of cursors manually or by automated measurement readout. It can also be difficult within the eye display to determine infrequently occurring jitter events because the intensity of these events will be more difficult to observe compared to the regular repeatable transitions within the SDI signal.

Within the WFM7120 and WFM6120 EYE option, a jitter readout is provided within the eye display. The readout provides a measurement in both unit intervals and time. For an operational environment, a jitter thermometer bar display provides a simple warning when an SDI signal exceeds a jitter threshold, as shown in Figure 21. When the bar turns red, it alerts the user to a potential problem in the system. The threshold value is user selectable.

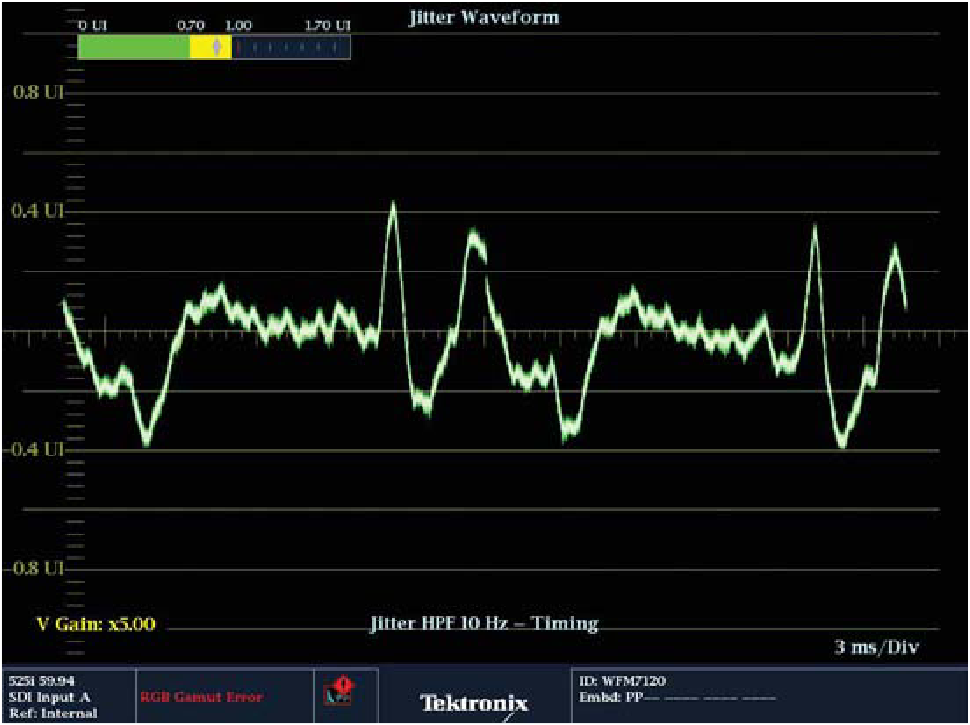

To characterize different types of jitter, the jitter waveform display available with the PHY option on the WFM6120 and WFM7120 provides a better way to investigate jitter problems within the signal than the eye display and jitter readout. The jitter waveform can be displayed in a one-line, two-line, one-field or two-field display related to the video rate. When investigating jitter within the system, it is useful to select the two-field display and increase the gain within the display. A small amount of jitter is present within all systems, but the trace should be a horizontal line. Increasing the gain to ten times will show the inherent noise within the system. This noise should be random in nature; if it is not, there is likely to be a deterministic component of jitter present within the signal.

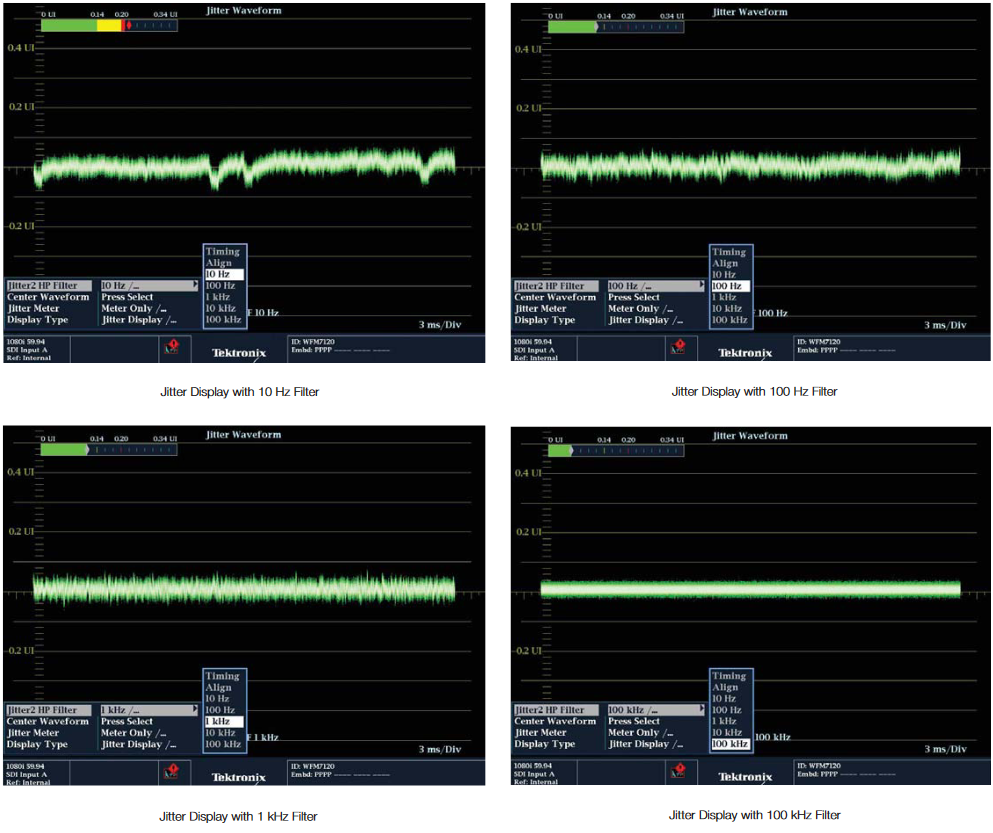

Within the instrument, one can apply 10 Hz, 100 Hz, 1 kHz, 10 kHz and 100 kHz filters for the measurement. These can aid in the isolation of jitter frequency problems. In the example shown in Figure 22, different filters were used and the direct jitter readout and jitter waveform display are shown. With the filter set to 10 Hz, the measurement of jitter was 0.2 UI and there are disturbances to the trace at field rates. There are also some occasional vertical shifts in the trace when viewed on the waveform display. This gives rise to larger peak-to-peak readings than would be measured from the display itself. When a 100 Hz filter is applied, some of the components of jitter are reduced and the vertical jumping of the trace is not present, giving a more stable display. The measurement now reads 0.12 UI; the disturbances at field rate are still present, however. Application of the 1 kHz filter reduces the components of jitter further and the trace is more of a flat line, although the disturbances at field rate can still be observed. The jitter readout did not drop significantly between the 100 Hz and 1 kHz filter selections. With the 100 kHz filter applied, the display now shows a flat trace and the jitter readout is significantly lower at 0.07 UI. In this case, the output of the device is within normal operating parameters for this unit and provides a suitable signal for decoding of the physical layer. Normally, as the filter selection is increased and the band-pass gets narrower, you would expect the jitter measurement to become smaller, as in this case. Suppose instead that, as the filter value is increased and the band-pass bandwidth narrowed, the jitter readout actually increased. What would this mean was occurring in the SDI signal?

One explanation for such results is that a pulse of jitter was present within the signal and that this pulse was at the band-pass edge of one of the filter selections. Instead of being removed by the filter, the pulse was differentiated, producing ringing at its rising and falling transitions and therefore a larger value of jitter within that filter selection.

By use of these filter selections, the user can determine within which frequency band the jitter components are present. Most of the frequency components present will be multiples of the line or field rate, which can be helpful in understanding which devices produce significant amounts of jitter within the SDI transmission path. Typically, the Phase Lock Loop (PLL) of the receiver will pass low-frequency jitter from the input to the output of the device, as the unit tracks the jitter present at its input. High-frequency jitter components are more difficult for the PLL to track and can cause locking problems in the receiver.

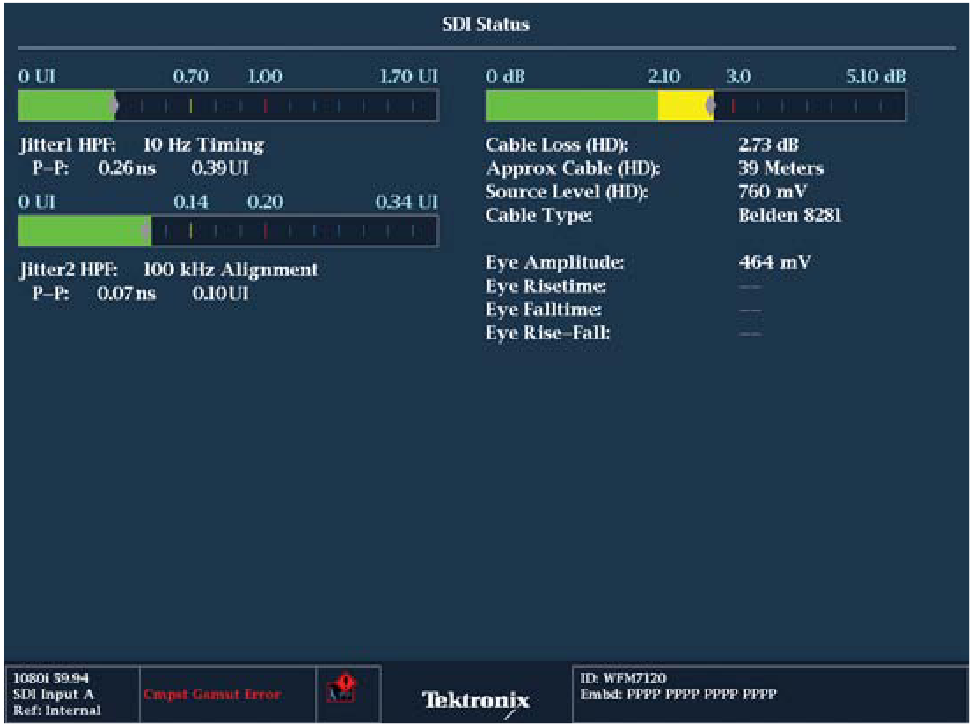

SDI status display

The SDI Status display provides a summary of several SDI physical layer measurements as shown in Figure 23. Within the WFM7120/6120 and WVR7120 with the Eye option, it is possible to show Timing and Alignment jitter simultaneously by configuring tiles one and two for Timing jitter and tiles three and four for Alignment jitter. The instruments will automatically change the filter setting for alignment jitter between HD (100 kHz) and SD (1 kHz) depending on the type of SDI signal applied. Additionally, a cable-length estimation measurement bar is shown within the SDI Status display. If the unit has the PHY option, automatic measurements of eye amplitude, eye risetime, eye falltime and eye rise-fall are made by the instrument. These automatic measurements provide a more accurate and reliable method of measuring the physical layer.

Cable-length measurements

The cable-length measurement is useful to quantify equipment operational margin within a transmission path. Most manufacturers specify their equipment to work within a specified range using a certain type of cable. For instance: [Receiver Equalization Range - Typically SD: to 250 m of type 8281 cable; HD: to 100 m of type 8281 cable.] In this example the cable type specified is 8281 cable. However, a different type of cable may be used throughout your facility. In this case, set the waveform monitor to the cable type specified by the equipment manufacturer and then measure the cable length. If the reading from the instrument is 80 meters, we know that this piece of equipment will work to at least 100 meters and that there are 20 meters of margin within this signal path. If the measurement were above 100 meters, we would have exceeded the manufacturer's recommendation for the device. Manufacturers specify their equipment to one of the most popular cable types, and it is not necessary to have that specific type of cable used in your facility when making this measurement. The WFM7120 and WFM6120 support the following cable types, which are typically used within specifications: Belden 8281, 1505, 1695A, 1855A, Image 1000 and Canare L5-CFB. Simply select the appropriate cable type from the configuration menu for the physical layer measurement. Once the cable type has been selected, apply the SDI signal to the instrument and it will provide measurements of Cable Loss, Cable Length and Estimated Source Signal Level.

- Cable Loss shows the signal loss in dB (decibels) along the cable length. A value of 0 dB indicates a good 800 mV signal, whereas a value of –3 dB indicates a signal with 0.707 of the expected amplitude. If we assume that the launch amplitude of the signal was 800 mV, then the amplitude of the signal at the measurement location would be approximately 565 mV.

- Cable Length indicates the length of the cable between the source signal and the waveform monitor. The instrument calculates the cable length based on the signal power at the input and the type of cable selected by the user.

- Source Level shows the calculated launch amplitude of the signal source, assuming a continuous run of cable, based on the specified type of cable selected by the user.
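The relationship between a cable loss reading in dB and the signal amplitude at the measurement point is the standard voltage-ratio formula. A minimal sketch follows; the function name is illustrative, and the 800 mV default is the nominal launch amplitude assumed in the text.

```python
def amplitude_after_loss_mv(loss_db, launch_mv=800.0):
    """Return the signal amplitude in mV after a cable loss given in dB.

    loss_db is negative for attenuation, for example -3.0 for a 3 dB loss.
    Uses the voltage ratio 10 ** (dB / 20).
    """
    return launch_mv * 10 ** (loss_db / 20.0)


if __name__ == "__main__":
    for loss in (0.0, -3.0, -6.0):
        print(f"{loss:+.1f} dB -> {amplitude_after_loss_mv(loss):.1f} mV")
```

For a –3 dB reading this gives about 566 mV, which matches the approximate 565 mV obtained by multiplying 800 mV by the rounded factor 0.707.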

These types of measurements can be particularly useful when qualifying a system and verifying equipment performance. By knowing the performance specification of the equipment, the user can gauge whether the device is operating within the allowable range. For instance, if the instrument is measuring 62 meters for the cable length of the signal as shown in Figure 89, then the user can compare this measurement with the operating margin for the equipment, which stated that the equalization range of the device will operate to at least 100 m of Belden 8281 cable. Therefore, the signal path has 38 meters of margin for the operation of this device. Remember that this measurement assumes a continuous run of cable. In some cases this measurement may have been made with a number of active devices within the signal path. If so, each link should be measured separately in turn, with a test signal source applied at one end of the cable and the measurement device at the other end. This will give a more reliable indication of the cable length within each part of the system and ensure that the system has sufficient headroom in each signal path. If the transmission distance exceeds the maximum length specified by the cable manufacturer, then additional active devices need to be inserted within the signal path to maintain the quality of the signal.
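The margin calculation described above is a simple subtraction of the measured equivalent cable length from the receiver's rated equalization range. The sketch below uses hypothetical function names and the figures from the text; it assumes both lengths are expressed for the same cable type.

```python
def cable_margin_m(rated_range_m, measured_length_m):
    """Return the remaining margin in meters; negative means the range is exceeded."""
    return rated_range_m - measured_length_m


def within_rated_range(rated_range_m, measured_length_m):
    """Return True if the measured length does not exceed the rated range."""
    return cable_margin_m(rated_range_m, measured_length_m) >= 0


if __name__ == "__main__":
    # HD receiver rated to 100 m of Belden 8281, as in the example specification.
    for measured in (62, 80, 110):
        margin = cable_margin_m(100, measured)
        status = "OK" if within_rated_range(100, measured) else "exceeds rated range"
        print(f"Measured {measured} m: margin {margin} m ({status})")
```

A negative margin indicates that the path exceeds the manufacturer's recommendation for that receiver.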

Timing between video sources

In order to transmit a smooth flow of information, both to the viewer and to the system hardware handling the signal, it is necessary that any mixed or sequentially switched video sources be in step at the point they come together. Relative timing between serial digital video signals that are within an operational range for use in studio equipment may vary from several nanoseconds to a few television lines. This relative timing can be measured by synchronizing a waveform monitor to an external source and comparing the relative positions of known picture elements.

Measurement of the timing differences in operational signal paths may be accomplished using the Active Picture Timing Test Signal available from the TG700 Digital Component Generator in conjunction with the timing cursors and line select of an externally referenced WFM6120 or WFM7120 series serial component waveform monitor. The Active Picture Timing Test Signal will have a luminance white bar on the following lines:

- 525-line signals: Lines 21, 262, 284, and 525

- 625-line signals: Lines 24, 310, 336, and 622

- 1250-, 1125-, and 750-line formats: first and last active lines of each field

To set relative timing of signal sources such as cameras, telecines, or video recorders, it may be possible to observe the analog representation of the SAV timing reference signal, which changes amplitude as vertical blanking changes to active video. The waveform monitor must be set to “PASS” mode to display an analog representation of the timing reference signals, and be locked to an external synchronizing reference (EXT REF).

Interchannel timing of component signals

Timing differences between the channels of a single component video feed will cause problems unless the errors are very small. Signals can be monitored in the digital domain, but any timing errors will likely be present from the original analog source. Since analog components travel through different cables, different amplifiers in a routing switcher, etc., timing errors can occur if the equipment is not carefully installed and adjusted. There are several methods for checking the interchannel timing of component signals. Transitions in the color bar test signal can be used with the waveform method described below. Tektronix component waveform monitors, however, provide two efficient and accurate alternatives: the Lightning display, using the standard color bar test signal; and the bowtie display, which requires a special test signal generated by Tektronix component signal generators.

Waveform method

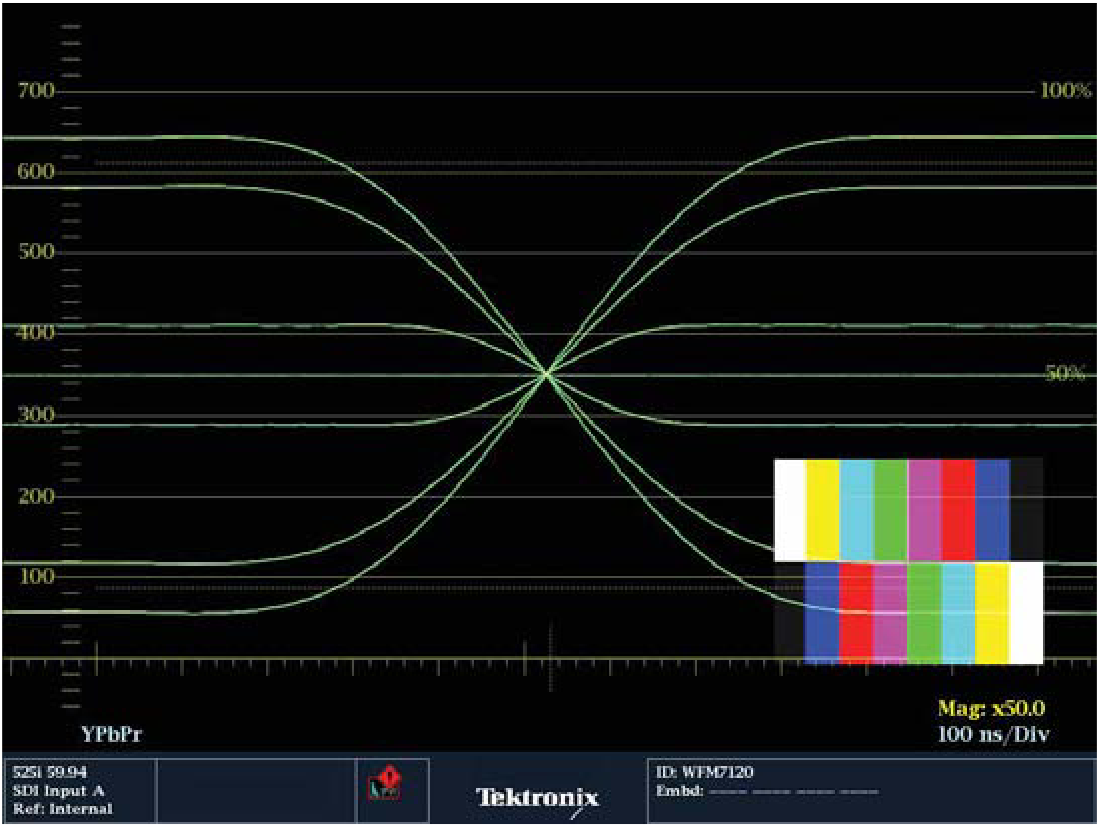

The waveform technique can be used with an accurately calibrated three-channel waveform monitor to verify whether transitions in all three channels are occurring at the same time. For example, a color bar signal has simultaneous transitions in all three channels at the boundary between the green and magenta bars (Figure 24).

To use the waveform method to check whether the green-magenta transitions are properly timed:

- Route the color bar signal through the system under test and connect it to the waveform monitor.

- Set the waveform monitor to PARADE mode and 1 LINE sweep.

- Vertically position the display, if necessary, so the midpoint of the Channel 1 green-magenta transition is on the 350 mV line.

- Adjust the Channel 2 and Channel 3 position controls so the zero level of the color-difference channels is on the 350 mV line. (Because the color-difference signals range from –350 mV to +350 mV, their zero level is at vertical center.)

- Select WAVEFORM OVERLAY mode and horizontal MAG.

- Position the traces horizontally for viewing the proper set of transitions. All three traces should coincide on the 350 mV line.

The Tektronix TG700 and TG2000 test signal generators can be programmed to generate a special reverse bars test signal, with the color bar order reversed for half of each field. This signal makes it easy to see timing differences by simply lining up the crossover points of the three signals. The result is shown in Figure 25.

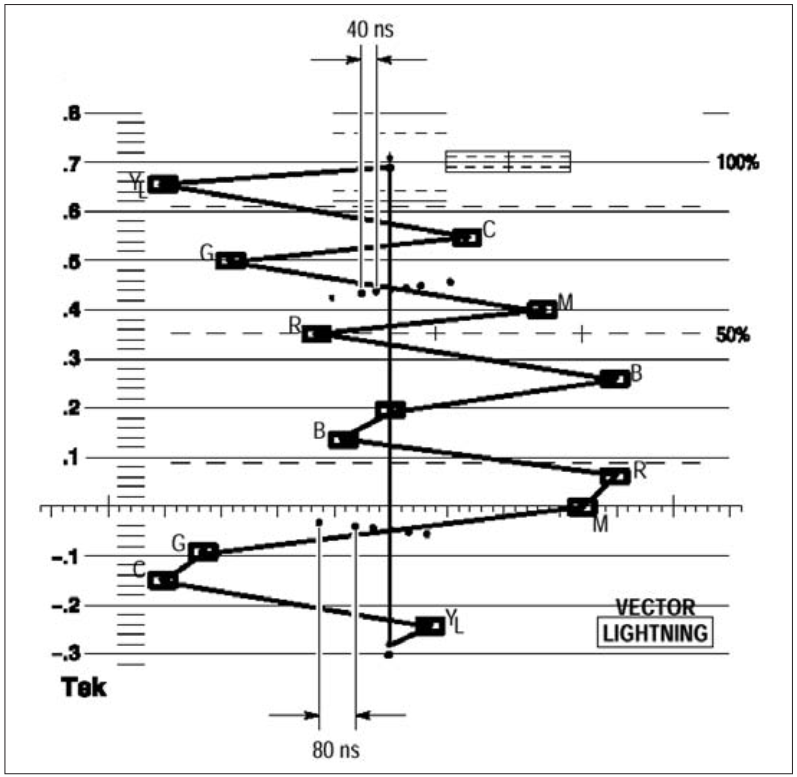

Timing using the Tektronix Lightning display

The Tektronix Lightning display provides a quick, accurate check of interchannel timing. Using a color bar test signal, the Lightning display includes graticule markings indicating any timing errors. Each of the Green/Magenta transitions should pass through the center dot in the series of seven graticule dots crossing its path. Figure 26 shows the correct timing.

The closely spaced dots provide a guide for checking transitions. These dots are 40 ns apart while the widely spaced dots represent 80 ns. The electronic graticule eliminates the effects of CRT nonlinearity. If the color-difference signal is not coincident with luma, the transitions between color dots will bend. The amount of this bending represents the relative signal delay between luma and color-difference signal. The upper half of the display measures the Pb to Y timing, while the bottom half measures the Pr to Y timing. If the transition bends in towards the vertical center of the black region, the color-difference signal is delayed with respect to luma. If the transition bends out toward white, the color-difference signal is leading the luma signal.

Bowtie method

The bowtie display requires a special test signal with signals of slightly differing frequencies on the chroma channels than on the luma channel. For standard definition formats, a 500 kHz sine-wave packet might be on the luma channel and a 502 kHz sine-wave packet on each of the two chroma channels (Figure 27). Other frequencies could be used to vary the sensitivity of the measurement display.

Higher packet frequencies may be chosen for testing high-definition component systems. Markers generated on a few lines of the luma channel serve as an electronic graticule for measuring relative timing errors. The taller center marker indicates zero error, and the other markers are spaced at 20 ns intervals when the 500 kHz and 502 kHz packet frequencies are used. The three sine-wave packets are generated to be precisely in phase at their centers. Because of the frequency offset, the two chroma channels become increasingly out of phase with the luma channel on either side of center.

The waveform monitor subtracts one chroma channel from the luma channel for the left half of the bowtie display and the second chroma channel from the luma channel for the right half of the display. Each subtraction produces a null at the point where the two components are exactly in phase (ideally at the center). A relative timing error between one chroma channel and luma, for example, changes the relative phase between the two channels, moving the null off center on the side of the display for that channel. A shift of the null to the left of center indicates the color-difference channel is advanced relative to the luma channel. When the null is shifted to the right, the color-difference signal is delayed relative to the luma channel.
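The sensitivity of the bowtie display can be estimated with an idealized model. The sketch below assumes equal-amplitude sine packets, luma as sin(2π·f_y·t) and chroma as sin(2π·f_c·(t − delay)), with t measured from the packet center; these are simplifying assumptions for illustration, not a description of the actual test signal. Under this model the null occurs where the two phases are equal, at t = f_c·delay / (f_c − f_y).

```python
import math

LUMA_HZ = 500e3     # luma packet frequency from the text (standard definition)
CHROMA_HZ = 502e3   # chroma packet frequency from the text


def null_shift_s(chroma_delay_s, luma_hz=LUMA_HZ, chroma_hz=CHROMA_HZ):
    """Return the null position in seconds relative to packet center.

    A positive result is later in the line (right of center), which
    corresponds to a chroma channel delayed relative to luma.
    """
    return chroma_hz * chroma_delay_s / (chroma_hz - luma_hz)


def phase_difference_rad(t_s, chroma_delay_s, luma_hz=LUMA_HZ, chroma_hz=CHROMA_HZ):
    """Return chroma phase minus luma phase at time t_s in the idealized model."""
    return 2 * math.pi * (chroma_hz * (t_s - chroma_delay_s) - luma_hz * t_s)


if __name__ == "__main__":
    for delay_ns in (-20, 0, 20, 40):
        shift_us = null_shift_s(delay_ns * 1e-9) * 1e6
        print(f"Chroma delay {delay_ns:+d} ns -> null at {shift_us:+.2f} us from center")
```

With the 500 kHz and 502 kHz packets, a 20 ns interchannel error moves the null by about 5 µs in this model, which is why such small timing errors become easy to see. A delayed chroma channel gives a positive (rightward) shift and an advanced one gives a leftward shift, consistent with the description above.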

The null, regardless of where it is located, will be zero amplitude only if the amplitudes of the two sine-wave packets are equal. A relative amplitude error makes the null broader and shallower, making it difficult to accurately evaluate timing. If you need a good timing measurement, first adjust the amplitudes of the equipment under test. A gain error in the luma (CH1) channel will mean neither waveform has a complete null. If the gain is off only in Pb (CH2), the left waveform will not null completely, but the right waveform will. If the gain is off only in Pr (CH3) the right waveform will not null completely, but the left waveform will.